Global artificial intelligence spending is set to surpass $2 trillion in 2026, according to Gartner, a steep increase over 2025. Yet despite this surge, most enterprises are struggling to turn investment into results. MIT’s GenAI Divide report found that 95% of AI pilots delivered zero measurable impact on the bottom line. The technology works. The strategy around it, for most organizations, does not.

That gap rarely comes down to model quality or engineering talent. It comes down to how companies scope the problem, who owns the initiative, and whether anyone planned for what happens after launch. In short, most leaders treat AI as a one-off IT project. The ones that succeed treat it as a program — one that touches people, processes, and priorities across the business.

This article lays out a Gen AI strategy framework grounded in hands-on implementation experience. It draws heavily on insights from Olga Hrom, Director of Pre-Sales Strategy & Delivery at Master of Code Global, an expert in Gen AI development services and intelligentization programs across several verticals.

Inside, you’ll find practical steps, real red flags, and the kind of nuance that most Generative AI business strategy guides skip — like why a focused two-hour planning session often beats a six-month roadmap, and why even the best solution fails if no one actually uses it. If those are the answers you’re looking for, let’s get into it.

Key Takeaways

- A Generative AI strategy for enterprises should be treated as an ongoing program, not a one-time project. It demands phased planning, clear ownership, and continuous measurement.

- Start with a specific business pain, not a technology. The strongest initiatives are rooted in real operational problems, not hype.

- Leadership matters more than tooling. Without a senior decision-maker driving the initiative, even well-funded programs lose momentum.

- Organizational readiness is non-negotiable. Misaligned expectations, weak data practices, and underestimated costs are the most common reasons AI programs stall.

- Plan for adoption from day one. Whether your users are employees or customers, adoption needs structure, communication, and time.

- Scaling Generative AI requires intention. Measure what your pilot actually delivered, capture lessons learned, and only then expand to the next use case.

- For most organizations, an implementation partner shortens the path to results, especially for a first major intelligentization initiative.

Why Most Enterprise Gen AI Strategies Fail Before They Start

The pattern is remarkably consistent. A company sees competitors adopting artificial intelligence, feels the pressure to act, and spins up a pilot. A technical team builds something. It ships. And then — nothing. No adoption plan. Unclear success metrics. No roadmap for what comes next. The pilot sits there, technically functional and strategically useless.

This is what Olga Hrom calls the “IT project trap.” Most companies approach AI implementation the same way they’d approach a software upgrade — scoped, built, and handed off. But it isn’t a system you install. It’s a capability you grow. When organizations treat it as a one-time deliverable, they miss the parts that actually determine success: change management, user adoption, and continuous measurement.

The data backs this up. MIT’s 2025 research found that large enterprises run the most AI pilots but have the lowest rates of pilot-to-scale conversion. Mid-market companies, by contrast, moved faster and more decisively — averaging 90 days from pilot to full implementation versus nine months for larger firms. Deloitte’s 2026 State of AI report adds another layer: while 66% of organizations report productivity gains from AI, only 20% are seeing actual revenue growth. The rest are optimizing at the surface without transforming anything underneath.

So where does it go wrong? Three places, typically. First, there’s no clear business problem driving the initiative, just a vague desire to “have it” that is fueled by trends in Generative AI rather than actual operational needs. Second, no one with real authority owns the program. Third, the organization skips the hardest part entirely: planning for how people will actually use, trust, and integrate the new tool into their daily work. Each of these failures is preventable, but only if you stop thinking of your enterprise AI strategy as a tech project and start thinking of it as organizational change.

For a deeper look at the numbers behind these patterns, explore our breakdown of Generative AI statistics across industries and use cases.

5 Core Areas of Generative AI Strategy for Business Leaders

The right approach depends on your organization’s size, maturity, and goals. But across dozens of programs spanning different industries, Olga Hrom has seen clear patterns in what separates initiatives that deliver from those that stall. Here are the core areas that matter most when integrating Generative AI into business strategy.

#1. Start with Business Pain, Not Technology

The most common mistake? Starting with the tool instead of the problem. Companies hear about a new model or platform, get excited, and rush to build something. But without a clear business pain behind the initiative, even a well-built solution has nowhere to land.

Olga Hrom puts it simply: before choosing any technology, the organization needs to answer one question — what exactly are we trying to fix? Not “where can we use AI?” but “where does it actually hurt?” The answer should be specific, scoped, and tied to a real operational bottleneck. A helpful technique here is the “5 Whys” — a root cause analysis method that pushes past surface-level answers. It doesn’t require weeks of workshops. Often, a single focused two-hour session with the right people in the room is enough to reach that depth.

This also means being honest about where AI is and isn’t applicable. Not every pain point is a good fit. For example, scenario planning might show that automating customer support works well for a bank handling thousands of routine requests. But for a luxury hotel brand whose clients expect human interaction, the same use case falls flat. A capability only has value in the right context.

Once you’ve identified a clear business case, the foundation of your enterprise AI strategy is set. You know what you’re solving, why it matters, and how success could be measured. From there, the conversation shifts from “should we do AI?” to “how do we do this well?”

#2. Secure the Right Leadership and Team Structure

Initiatives don’t fail because of bad engineers. They fail because no one with authority is driving the change. This is one of the most consistent patterns Olga Hrom has observed: when there’s no clear owner at the leadership level, projects drift, stall, or quietly get deprioritized.

This matters because AI implementation isn’t a technical decision. It’s a business decision. Someone needs to align the initiative with company goals, secure buy-in across departments, and have the authority to push things forward when resistance appears. Without that, even a well-funded project stays stuck in pilot mode.

At a minimum, three roles need to be in place:

- AI Visionary — a senior decision-maker who understands the technology, connects it to organizational goals, and has the authority to greenlight experiments.

- Implementation Manager — someone who translates vision into execution. They coordinate timelines, manage vendors, track progress, and keep the project moving.

- Implementation Team — the people who do the technical work. They build, test, and deploy. This team can be outsourced, especially for a company’s first major AI program.

PwC’s 2026 predictions reinforce this. Companies that adopt a top-down, leadership-driven approach to AI-driven strategic planning consistently outperform those that crowdsource initiatives from the bottom up. Enterprise-wide adoption needs direction, not just enthusiasm.

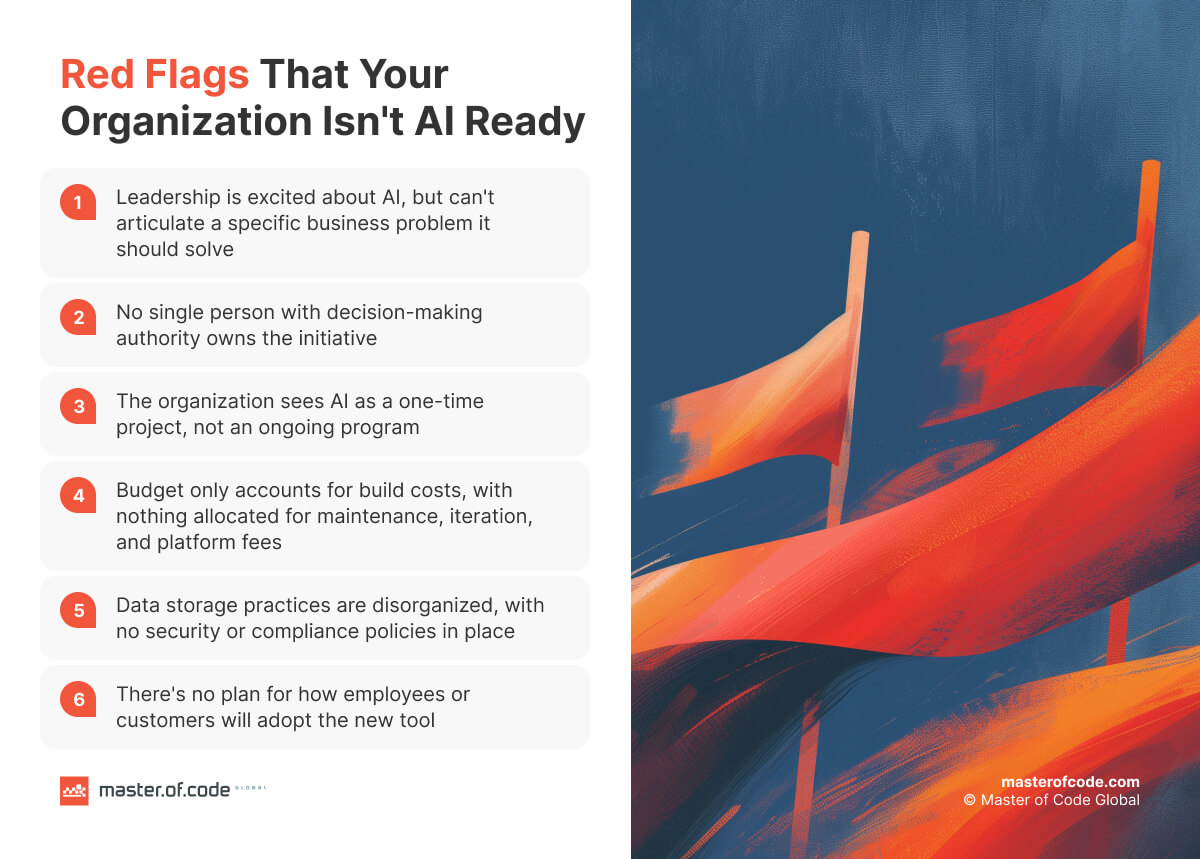

#3. Assess Organizational Readiness (and Be Honest About It)

Not every company that wants artificial intelligence is ready for it. That’s not a criticism; it’s a practical reality. And recognizing it early saves both time and budget.

Olga Hrom has seen a recurring set of red flags that signal an organization needs to do some groundwork before jumping into implementation. They tend to fall into three categories:

- Misaligned expectations about the technology. This cuts both ways, and both are equally harmful. Some leaders overestimate what AI can do, expecting fast results with minimal planning. Others underestimate the effort, treating it like installing a new plugin. Overestimation leads to rushed pilots with no real design behind them. Underestimation results in underfunded projects that lack the resources to scale. A strong Gen AI strategy starts with a realistic understanding of what the technology requires in time, expertise, and ongoing commitment.

- Underestimated costs. Such projects carry ongoing expenses — tokens, platform fees, maintenance, iteration. Many organizations budget as if it were a one-time software purchase. When the real Generative AI cost becomes clear, the gap between expectations and reality can slow momentum significantly. Starting with a smaller pilot or MVP is often the more practical path forward.

- Weak data governance and security. Artificial intelligence doesn’t exist in a vacuum. It sits on top of your existing data infrastructure. If that foundation has gaps — unclear storage policies, missing access controls, no compliance framework — layering AI on top only exposes those problems. As Olga puts it, you can’t build a GDPR-compliant solution on a non-compliant organization.

The underlying principle is similar to renovating a building. You wouldn’t install a smart home system in a house with faulty wiring. The same logic applies here. Artificial intelligence will surface every weakness in your workflows and AI governance practices. It’s better to find those gaps before launch than to let them become a live AI risk management problem afterward.
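To make the point about recurring costs concrete, here is a minimal sketch of a first-year budget that separates the one-time build from the monthly run cost (tokens, platform fees, maintenance). Every number, price, and name below is a hypothetical placeholder, not a vendor rate or a figure from any real program; swap in your own estimates.

```python
from dataclasses import dataclass


@dataclass
class MonthlyUsage:
    """Hypothetical usage assumptions for a pilot; all figures are placeholders."""
    requests_per_month: int
    input_tokens_per_request: int
    output_tokens_per_request: int


def monthly_run_cost(
    usage: MonthlyUsage,
    input_price_per_1k: float,
    output_price_per_1k: float,
    platform_fee: float,
    maintenance: float,
) -> float:
    """Estimate the recurring monthly cost: token spend plus fixed fees."""
    input_tokens = usage.requests_per_month * usage.input_tokens_per_request
    output_tokens = usage.requests_per_month * usage.output_tokens_per_request
    token_cost = (
        input_tokens / 1000 * input_price_per_1k
        + output_tokens / 1000 * output_price_per_1k
    )
    return token_cost + platform_fee + maintenance


def first_year_cost(build_cost: float, monthly_cost: float, months: int = 12) -> float:
    """One-time build cost plus the recurring cost over the given months."""
    return build_cost + monthly_cost * months


# Illustrative inputs only (assumed, not real pricing).
pilot = MonthlyUsage(
    requests_per_month=20_000,
    input_tokens_per_request=1_000,
    output_tokens_per_request=500,
)
monthly = monthly_run_cost(
    pilot,
    input_price_per_1k=0.01,
    output_price_per_1k=0.03,
    platform_fee=500.0,
    maintenance=2_000.0,
)
total = first_year_cost(build_cost=40_000.0, monthly_cost=monthly)

print(f"Monthly run cost: {monthly:,.2f}")
print(f"First-year total: {total:,.2f}")
```

Even with placeholder inputs, a split like this shows how much of the first-year total sits in the recurring line rather than the build, which is the part a one-time-purchase budget tends to miss.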

#4. Plan for Adoption from Day One, Not After Launch

You can build the most sophisticated solution on the market. But if the people it’s designed for don’t use it — or don’t trust it — none of that matters. Whether those people are employees or customers, adoption isn’t a post-launch problem. It’s something that needs to be part of the Generative AI business strategy from the very beginning.

This is where the change management lens becomes essential. Launching an intelligent tool is, at its core, introducing change — to workflows, habits, and expectations. Olga Hrom compares it to any major tool migration. Even something as simple as switching to a new messaging platform requires communication, timelines, and follow-up. AI is far more disruptive and deserves at least the same level of attention.

A few fundamentals that apply regardless of who your end user is:

- Communicate early and clearly. Explain what’s changing and why. Ambiguity breeds resistance.

- Define what success looks like. Data-driven decision making starts with knowing what you’re measuring from day one, not after launch.

- Start with a smaller group first. Test with a focused set of early users, gather feedback, and iterate before scaling.

- Assign ownership. Someone needs to track whether adoption is actually happening and flag issues early.

- Give it time. Depending on the complexity, stable usage typically takes three to six months. Rushing that process rarely ends well.

Human-in-the-loop decision making also plays a dual role here. It’s a safety mechanism — keeping people involved in critical outputs. But it’s also a trust builder. When end users see that AI supports decisions rather than replaces judgment, resistance drops.
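For whoever owns adoption tracking, a simple weekly metric is often enough to start. The sketch below computes an adoption rate and applies one possible "stable usage" rule. The 60% target, the four-week window, and the sample numbers are all assumptions chosen for illustration; pick thresholds that fit your own rollout.

```python
from statistics import mean


def adoption_rate(active_users: int, eligible_users: int) -> float:
    """Share of eligible users who used the tool in a given week."""
    if eligible_users <= 0:
        raise ValueError("eligible_users must be positive")
    return active_users / eligible_users


def is_stable(
    weekly_rates: list[float],
    window: int = 4,
    target: float = 0.6,
    max_spread: float = 0.05,
) -> bool:
    """Hypothetical stability rule: the last `window` weeks average at least
    `target` and differ from each other by no more than `max_spread`."""
    if len(weekly_rates) < window:
        return False
    recent = weekly_rates[-window:]
    return mean(recent) >= target and max(recent) - min(recent) <= max_spread


# Made-up weekly active-user counts for a group of 100 eligible users.
weekly_active = [12, 25, 41, 58, 63, 66, 64, 65]
eligible = 100
rates = [adoption_rate(active, eligible) for active in weekly_active]

print("Stable after 4 weeks:", is_stable(rates[:4]))
print("Stable after 8 weeks:", is_stable(rates))
```

The exact rule matters less than agreeing on one before launch, so that "is adoption happening?" has an answer someone can check every week.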

#5. Move from Pilots to Production with Intention

Pilots are necessary. But they’re not the finish line. One of the biggest traps in implementing a Generative AI business strategy is treating a successful pilot as proof that the organization is ready to scale. It’s not. It’s proof that the concept works. What comes next is a different challenge entirely.

Scaling Gen AI means moving from “it works in a controlled environment” to “it delivers value across the business.” Before expanding, make sure you can answer these questions clearly:

- Did we measure what matters? Not just whether the tool functions, but whether it moves a metric. Data-driven decision making is what tells you whether to double down, adjust, or stop.

- Do we know what broke? Every pilot surfaces surprises — edge cases, integration issues, user behavior you didn’t expect. Document those before replicating the approach elsewhere.

- Is our infrastructure ready for growth? A prototype running on a basic setup won’t hold at scale. An AI-ready technology stack with clean data pipelines, secure integrations, and proper monitoring needs to be in place before you expand.

- Do we have a rollout sequence? Scaling doesn’t mean launching everywhere at once. Prioritize the next use case based on business impact, feasibility, and what you’ve already learned.
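The rollout-sequence question above can be turned into a simple weighted score. This is only a sketch: the three criteria come from the list, but the 1-to-5 scales, the weights, and the example use cases are assumptions made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class UseCase:
    name: str
    business_impact: int  # 1 (low) to 5 (high)
    feasibility: int      # 1 (hard) to 5 (easy)
    lessons_reuse: int    # 1 (little carries over from the pilot) to 5 (most does)


# Assumed weights; adjust them to reflect your own priorities.
WEIGHTS = {"business_impact": 0.5, "feasibility": 0.3, "lessons_reuse": 0.2}


def score(use_case: UseCase) -> float:
    """Weighted sum of the three criteria."""
    return (
        WEIGHTS["business_impact"] * use_case.business_impact
        + WEIGHTS["feasibility"] * use_case.feasibility
        + WEIGHTS["lessons_reuse"] * use_case.lessons_reuse
    )


def rollout_sequence(candidates: list[UseCase]) -> list[UseCase]:
    """Return candidates ordered from highest to lowest score."""
    return sorted(candidates, key=score, reverse=True)


# Hypothetical candidates for the next deployment.
candidates = [
    UseCase("Contract summarization", business_impact=5, feasibility=2, lessons_reuse=2),
    UseCase("Support ticket triage", business_impact=4, feasibility=5, lessons_reuse=5),
    UseCase("Internal knowledge search", business_impact=3, feasibility=4, lessons_reuse=3),
]

for use_case in rollout_sequence(candidates):
    print(f"{use_case.name}: {score(use_case):.1f}")
```

A score like this is a conversation starter rather than a verdict. Its main value is forcing the team to write down why one use case goes before another.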

Every deployment should generate input for the next one. What you learned from your first initiative — about costs, adoption, tech limitations — becomes the foundation for your second. Organizations that skip this step tend to repeat the same mistakes in a new department with a bigger budget.

This is also why scaling Generative AI works better when you think of it as a program rather than a project. A project has a delivery date. A program has phases, feedback loops, and room to evolve. Your enterprise Gen AI strategy should reflect that.

Build vs. Buy: How to Choose Your Implementation Path

Once the Gen AI strategy is in place, the next question is execution. Do you build in-house, or bring in an external partner? The answer depends on where your organization stands today — not where it hopes to be in a year.

MIT’s research offers a useful benchmark here. Vendor-led custom AI projects succeed roughly 67% of the time. Internal builds? About 33%. That gap isn’t about talent. It’s about focus, experience, and the ability to move from concept to production without getting stuck in internal complexity.

That said, building in-house makes sense in certain situations:

- You’ve already completed at least one AI initiative with external support and understand what the process involves.

- Your team has hands-on experience deploying and maintaining such solutions.

- Your industry’s regulatory or security requirements make it difficult to involve external teams in day-to-day operations.

Outsourcing tends to be the stronger path when:

- You want to reduce risk and accelerate enterprise-wide AI adoption by working with a team that’s done this before.

- This is your organization’s first significant intelligentization initiative.

- You need both strategic guidance and hands-on execution.

- Speed matters, and assembling an internal team would take months.

For organizations starting out, engaging a Generative AI consultant can help define the roadmap before committing to full-scale development. This includes identifying the right use cases, evaluating technical feasibility, and building a realistic timeline — all before a single line of code is written.

Wrapping Up

A Generative AI business strategy isn’t a document you write once and file away. It’s an operating model — one that evolves as your organization learns, scales, and adapts. The companies that treat it this way consistently outperform those chasing the next shiny pilot.

The pattern behind successful enterprise-wide AI adoption is surprisingly consistent. It starts with a real business problem. It’s led by someone with authority and supported by a capable team. It accounts for organizational readiness and plans for adoption before launch — not after. And it treats every deployment as a learning opportunity that feeds the next one.

None of this requires a massive upfront investment or a year-long planning cycle. It does require honesty about where your company stands, clarity about what you’re solving, and the discipline to approach AI-driven strategic planning as a long-term program rather than a quick win.

If you are ready to move from strategy to execution, Master of Code Global can help. As an implementation partner and Generative AI integration services provider, we bring both the strategic guidance and delivery capacity to turn your vision into working solutions — from initial AI business strategy consulting through development and deployment. Reach out to start the conversation.