In January 2026 alone, voice AI startups raised $1.23 billion. ElevenLabs closed $500M at an $11B valuation, Parloa hit $3B, Decagon reached $4.5B, and Deepgram secured $130M. When capital concentrates at this density in a single month, it signals something deeper than hype; voice AI is crossing from product feature to enterprise infrastructure.

Yet most organizations still struggle to extract real value from the shift. McKinsey’s 2025 State of AI found that 88% of companies use AI in at least one function, but only 6% qualify as high performers. An MIT study puts it more bluntly: 95% of GenAI pilots deliver little to no measurable P&L impact. The gap isn’t awareness of voice AI trends; it’s turning them into outcomes.

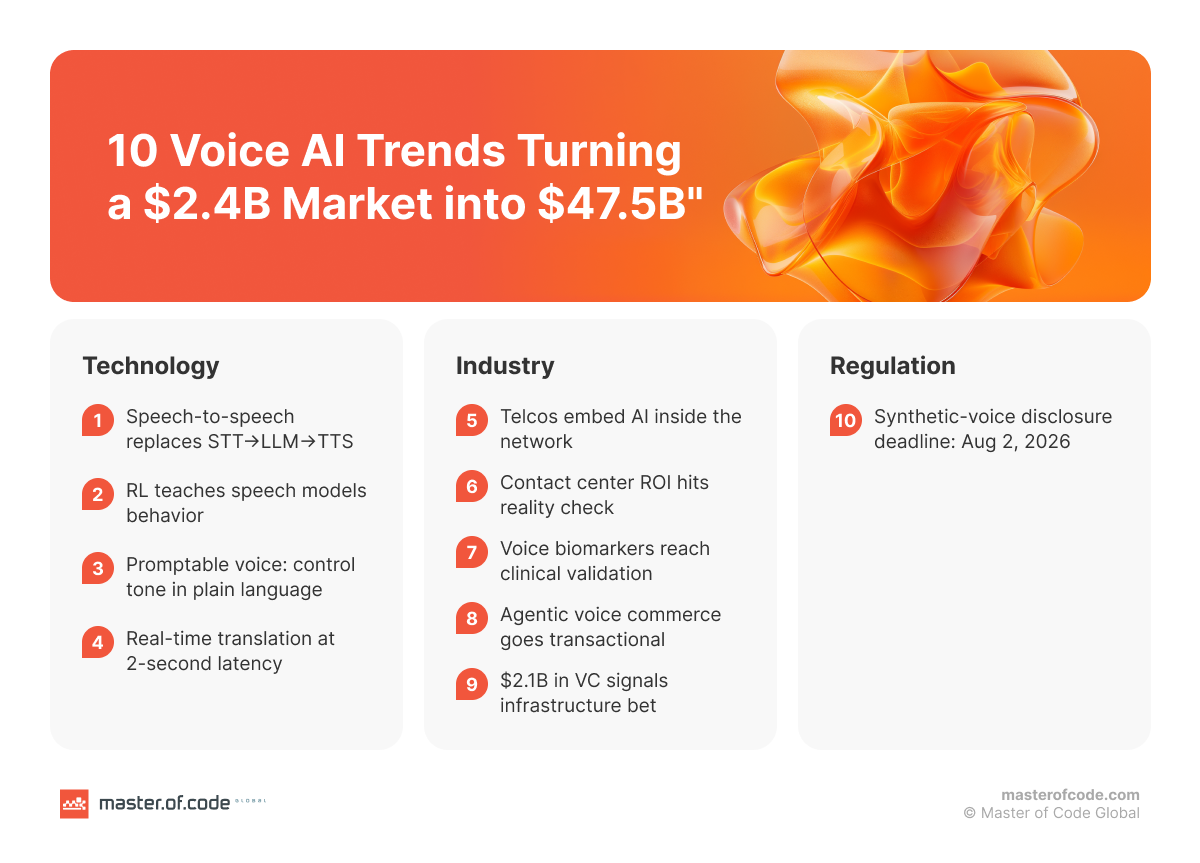

This article bridges that gap. We break down 10 conversational AI trends for 2026 across three layers: the technology upgrades reshaping your omnichannel stack, the industry forces redefining where value gets captured, and the regulatory deadlines your team can’t afford to miss. Think of it less as a trend list and more as a translation layer between what’s changing and what to do about it.


Voice AI Trends Reshaping the Stack

How End-to-End Speech-to-Speech Models Are Replacing the Classic Pipeline

For years, building a voice AI system meant stitching together three separate components: a speech-to-text engine, a large language model for reasoning, and a text-to-speech engine for output. Each handoff introduced latency, error compounding, and integration overhead. If the STT misheard a word, the LLM reasoned on garbage, and the TTS faithfully spoke nonsense back.
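To make the compounding concrete, here is a minimal Python sketch of one cascaded turn. The stage latencies and success rates are hypothetical placeholders, not benchmarks. The model also assumes that stages run strictly one after another and fail independently. Real pipelines stream partial results, so their latencies overlap somewhat.

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    latency_ms: float    # hypothetical per-stage latency
    success_rate: float  # hypothetical probability the stage output is correct


def cascade_summary(stages: list[Stage]) -> tuple[float, float]:
    """Return (total latency in ms, probability that every stage succeeds).

    Latency adds because each stage waits for the previous one; accuracy
    multiplies because a stage cannot recover from an upstream error.
    """
    total_latency = sum(s.latency_ms for s in stages)
    p_all_correct = 1.0
    for s in stages:
        p_all_correct *= s.success_rate
    return total_latency, p_all_correct


pipeline = [
    Stage("speech-to-text", 300, 0.95),
    Stage("LLM reasoning", 500, 0.95),
    Stage("text-to-speech", 200, 0.99),
]
latency, p_ok = cascade_summary(pipeline)
print(f"{latency:.0f} ms, {p_ok:.3f}")
```

With these made-up numbers, each stage looks acceptable on its own. Even so, more than one turn in ten goes wrong somewhere, and the caller waits a full second. A single end-to-end model removes the handoffs that this arithmetic penalizes.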

That cascaded pipeline is now giving way to what may be the most consequential voice AI technology shift of the year: end-to-end speech-to-speech models that take audio in and produce audio out through a single inference loop, with native function calling built in.

Voila demonstrated 195 ms response latency in May 2025, faster than the average human reaction time. It also offers over a million pre-built voices and can customize new ones from audio samples as short as 10 seconds. Moonshot AI’s Kimi-Audio packs recognition, understanding, and generation into one 7B-parameter model pretrained on 13 million hours of audio, outperforming models 18x its size.

OpenAI’s gpt-realtime, which reached general availability in August 2025, ships audio-native processing with SIP phone integration at $32 per million input tokens, roughly 20% cheaper than the preview. And NVIDIA’s PersonaPlex, accepted at ICASSP 2026, pushed the multimodal boundary further with zero-shot voice cloning and text-based persona conditioning, so agents can adopt specific voices and roles in real time.
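For budgeting, the pricing figures above reduce to simple arithmetic. The sketch below backs out the preview price implied by a 20% discount and estimates the input-side cost of a call. The tokens-per-minute rate is a hypothetical placeholder, not a published OpenAI figure. Output tokens are billed separately and are left out here.

```python
PRICE_PER_M_INPUT_TOKENS = 32.00  # gpt-realtime input price cited above (USD)
DISCOUNT_VS_PREVIEW = 0.20        # "roughly 20% cheaper than the preview"


def implied_preview_price(price: float, discount: float) -> float:
    """Back out the earlier price implied by a fractional discount."""
    return price / (1.0 - discount)


def input_cost_usd(minutes: float, tokens_per_minute: float,
                   price_per_m: float = PRICE_PER_M_INPUT_TOKENS) -> float:
    """Input-side cost only; output tokens are billed separately."""
    return minutes * tokens_per_minute * price_per_m / 1_000_000


# HYPOTHETICAL: 600 input tokens per minute is a placeholder, not a published
# figure; measure the real rate from the usage data of your own calls.
print(round(implied_preview_price(PRICE_PER_M_INPUT_TOKENS, DISCOUNT_VS_PREVIEW), 2))
print(round(input_cost_usd(5, 600), 4))
```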

For your business, this collapses complexity. Fewer handoff points mean lower integration costs with existing CRM systems and enterprise workflows, and QA gets simpler when there’s one model to monitor instead of three. Because these models unify reasoning and generation in a single pass, they move real-time AI voice assistants from lab concept to production reality. That makes this one of the 2026 voice AI trends with the most immediate ROI potential.

When Speech Models Learn Behavior: RL and Promptable Voice Reshape Control

Until recently, customizing a voice AI agent meant retraining models or commissioning bespoke “projects,” weeks of work for every new tone, accent, or domain. Two parallel shifts are dismantling that bottleneck, and they share the same root: speech models are gaining behavioral control without fine-tuning.

The first shift is reinforcement learning migrating from text into speech. Researchers are importing GRPO (Group Relative Policy Optimization) techniques from the text LLM world directly into audio. Kyutai’s Hibiki-Zero uses GRPO to optimize simultaneous translation policy, teaching the model when to listen versus when to speak, without word-level aligned training data.

B-GRPO applies the same approach to emotion recognition, achieving a 19.8% improvement over non-RL baselines without any labeled emotion data. These models aren’t just generating audio. They’re learning judgment.
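The core idea GRPO brings over from text LLMs fits in a few lines. The model samples a group of outputs for the same input and scores each one. It then rates every output relative to the group’s mean and spread, so no separate value model is needed. The sketch below shows only that advantage step, using the standard GRPO normalization. The full method also uses a clipped policy-ratio objective and a KL penalty. The reward values are hypothetical and are not taken from Hibiki-Zero or B-GRPO.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """GRPO-style advantages: score each sampled output against its own group.

    For G outputs sampled for the same input,
    A_i = (r_i - mean(r)) / (std(r) + eps).
    The group itself acts as the baseline, so no learned critic is needed.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Hypothetical rewards for four sampled outputs on one utterance, e.g. a score
# that rewards accurate translation and penalizes waiting too long to speak.
rewards = [0.2, 0.5, 0.9, 0.4]
adv = group_relative_advantages(rewards)
print([round(a, 2) for a in adv])
```

Outputs that beat their group’s average get positive advantages and are reinforced, while below-average ones are pushed down. No word-level labels are needed, only a reward for each sample.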

The second shift is promptable voice. Just as large language models in voice AI became steerable through system prompts, speech models now accept natural-language instructions for how they sound and behave.

AssemblyAI’s Slam-1 lets developers pass 1,000-word context prompts to customize transcription on the fly – 66% of human evaluators preferred it over prior models. ElevenLabs v3, generally available since February 2026, supports expressive audio tags like [excited], [whispers], and [sighs], while cutting errors on numbers and technical notation by 68%. And Deepgram’s Flux integrates end-of-turn detection directly into its Conversational Speech Recognition model, cutting latency to the first token by 50%.
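To see why folding end-of-turn detection into the recognizer matters, consider the traditional alternative: a fixed silence timeout. The Python sketch below is that naive baseline, not Deepgram’s method. The frame size, energy threshold, and timeout are hypothetical defaults. Every turn it detects costs at least the full timeout of silence, and that is exactly the latency a model-based turn detector tries to reclaim.

```python
from typing import Optional


def detect_end_of_turn(frame_energies: list[float], frame_ms: int = 20,
                       energy_threshold: float = 0.01,
                       silence_timeout_ms: int = 600) -> Optional[int]:
    """Naive endpointing: declare end-of-turn after a fixed run of quiet frames.

    Returns the index of the frame at which the turn is declared over, or None.
    Only silence *after* speech has started counts toward the timeout.
    """
    needed = silence_timeout_ms // frame_ms  # consecutive quiet frames required
    heard_speech = False
    quiet_run = 0
    for i, energy in enumerate(frame_energies):
        if energy >= energy_threshold:
            heard_speech = True
            quiet_run = 0
        elif heard_speech:
            quiet_run += 1
            if quiet_run >= needed:
                return i
    return None


# 100 ms of silence, 200 ms of speech, then 800 ms of silence (20 ms frames).
frames = [0.0] * 5 + [0.2] * 10 + [0.0] * 40
print(detect_end_of_turn(frames))
```

Lowering `silence_timeout_ms` speeds up replies but cuts off callers who pause mid-thought. A fixed threshold cannot tell a thinking pause from a finished sentence.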

Together, these shifts make the voice layer as programmable as the text layer. Sentiment analysis becomes genuinely context-aware through RL rather than rigid rule sets. Hyper-personalization also scales across touchpoints, because switching a voice persona now means editing a prompt, not rebuilding a model.
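In practice, “editing a prompt” can be as simple as swapping a small persona record. The sketch below is hypothetical: the `VoicePersona` class and its fields are illustrative. It only composes text using the bracketed audio-tag syntax described above ([excited], [whispers], [sighs]) and does not call any vendor API. Check your TTS provider’s documentation for the tags it actually supports.

```python
from dataclasses import dataclass, field
from typing import Optional

# Audio tags named in the text; an illustrative subset, not a vendor's full list.
ALLOWED_TAGS = {"excited", "whispers", "sighs"}


@dataclass
class VoicePersona:
    """Hypothetical persona record: switching persona means swapping this object."""
    name: str
    style_instructions: str            # natural-language guidance for the model
    default_tag: Optional[str] = None  # audio tag prepended to each line
    keyterms: list[str] = field(default_factory=list)  # domain vocabulary hints

    def render_line(self, text: str, tag: Optional[str] = None) -> str:
        """Prefix a line with an audio tag, rejecting tags outside the allow-list."""
        chosen = tag or self.default_tag
        if chosen is None:
            return text
        if chosen not in ALLOWED_TAGS:
            raise ValueError(f"unsupported audio tag: {chosen}")
        return f"[{chosen}] {text}"

    def context_prompt(self) -> str:
        """Combine style guidance and key terms into one instruction string."""
        terms = ", ".join(self.keyterms) if self.keyterms else "none"
        return f"{self.style_instructions} Key terms: {terms}."


promo = VoicePersona("launch-promo", "Upbeat, short sentences.", "excited")
night = VoicePersona("night-support", "Calm and unhurried.", "whispers",
                     keyterms=["refund", "order number"])

print(promo.render_line("Your order has shipped!"))
print(night.render_line("Your order has shipped!"))
print(night.context_prompt())
```

The same script renders differently under each persona. This is the kind of artifact a tone library or domain template can version and review like any other prompt.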

This is the kind of transition that demands a conversation design partner with deep prompt-engineering expertise, and it’s why teams working with Master of Code Global are already building voice prompt playbooks (tone libraries, domain templates, turn-taking configs) alongside their traditional dialog flows.

Real-Time Translation Hits Production-Grade, and It Preserves Your Voice

Language barriers in customer service have always meant one of two things: hire native speakers for every market, or accept a degraded experience. That calculus is changing fast. Google deployed speech-to-speech translation in Google Meet at I/O 2025 with just a 2-second delay while preserving the speaker’s voice, tone, and expression. By December 2025, the technology expanded to 70+ languages inside the Google Translate app on Android.

Meanwhile, Kyutai’s Hibiki-Zero showed that adapting a translation model to an entirely new language now requires fewer than 1,000 hours of speech data, down from datasets orders of magnitude larger. Real-time translation is migrating from a “meeting feature” you toggle on to an invisible layer inside your communications stack, embedded in UCaaS, CCaaS, and CRM integrations. Multilingual support stops being a staffing problem and starts reshaping core business processes across global operations.

But that infrastructure raises data privacy questions. Translated content crosses jurisdictions, and the compliance implications of processing voice data through third-party translation layers vary country by country. Factor that into your evaluation before procurement, not after.
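One way to make that evaluation concrete is a fail-closed routing check that runs before any audio leaves your stack. Everything here is hypothetical: the vendor record, the region codes, and especially the policy table. In a real deployment, that table comes from legal review, not from a hard-coded dictionary.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TranslationVendor:
    name: str
    processing_regions: frozenset[str]  # regions where the vendor processes audio


# HYPOTHETICAL policy: processing regions permitted for each caller jurisdiction.
ALLOWED_REGIONS = {
    "EU": frozenset({"EU"}),
    "US": frozenset({"US", "EU"}),
}


def vendor_allowed(vendor: TranslationVendor, caller_jurisdiction: str) -> bool:
    """True only if every region the vendor uses is permitted for this caller."""
    allowed = ALLOWED_REGIONS.get(caller_jurisdiction)
    if allowed is None:
        return False  # unknown jurisdiction: fail closed rather than guess
    return vendor.processing_regions <= allowed  # subset check


vendor_a = TranslationVendor("vendor-a", frozenset({"US"}))
vendor_b = TranslationVendor("vendor-b", frozenset({"EU"}))
for caller in ("EU", "US", "BR"):
    print(caller, vendor_allowed(vendor_a, caller), vendor_allowed(vendor_b, caller))
```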

Voice AI Industry Trends

Telcos Embed AI Inside the Phone Network

Some of the most surprising voice AI trends of 2026 didn’t come from Silicon Valley. They came from telecom operators. Deutsche Telekom unveiled its Magenta AI Call Assistant, an AI layer inserted directly into its cloudified voice network infrastructure. It provides live translation, conversation summaries, and contextual help during ordinary phone calls. Say “Hey Magenta” while discussing dinner plans, and the assistant suggests restaurants and checks availability in real time.

In South Korea, LG U+ launched ixi-O, a network-level AI agent that detects spam, identifies voice phishing by analyzing call context, and lets users summon real-time information search mid-call. This is structurally different from app-layer voice assistants.

Telcos ship AI where the traffic already lives – no new software deployment, no additional integration into your enterprise workflows. But that convenience carries a trade-off worth scrutinizing:

- Data governance. Who owns the conversation data when AI processing happens at the carrier level?

- Enterprise-grade security. What are the encryption and retention policies for AI-analyzed calls?

- Compliance exposure. If your carrier’s AI summarizes a call containing sensitive customer data, which jurisdiction’s privacy rules apply?

Contact Centers: The $80 Billion Reality Check

2026 is the deadline for Gartner’s bold prediction that conversational AI would reduce contact center agent labor costs by $80 billion, across a global workforce of 17 million agents. Some numbers suggest it’s working. A Forrester study of PolyAI found 391% ROI, with average savings of $10.3 million per enterprise customer.
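Two quick calculations put these headline numbers in perspective. Spreading Gartner’s $80 billion evenly over 17 million agents is a simplification, because savings will not be uniform. The ROI helper uses the conventional (benefits - costs) / costs definition, which we assume matches the one the study used.

```python
GARTNER_SAVINGS_USD = 80e9  # predicted reduction in agent labor costs
GLOBAL_AGENTS = 17e6        # contact center agents worldwide, per the prediction


def roi(benefits: float, costs: float) -> float:
    """Conventional ROI: net benefit as a multiple of cost (3.91 means 391%)."""
    return (benefits - costs) / costs


per_agent = GARTNER_SAVINGS_USD / GLOBAL_AGENTS
print(f"Average saving per agent: ${per_agent:,.0f}")

# What a 391% ROI implies, using a hypothetical cost base of 100.
print(f"{roi(491.0, 100.0):.0%}")
```

The even split works out to roughly $4,706 per agent. A 391% ROI means benefits of about 4.9 times costs.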

But the full picture is more sobering. Forrester’s 2026 predictions warn that one-third of companies will damage customer experiences with frustrating AI self-service, and enterprises will delay 25% of planned AI spend into 2027 over ROI concerns. Deloitte’s survey of 3,235 leaders across 24 countries found that while 74% aspire to grow revenue through AI, only 20% are succeeding, and just 14% have agentic AI ready for deployment.

At Master of Code Global, we see this tension firsthand. Clients often come to us after an initial voice AI deployment hit impressive deflection numbers but quietly eroded customer satisfaction. The pattern is predictable. Metrics that look great on a dashboard, such as call deflection and handle-time reduction, can mask the absence of real personalization, along with rising repeat-call rates and silent churn. That’s why our teams build sentiment tracking into closed-loop QA systems from day one, not as a reporting afterthought.

What Separates Hype Metrics from Operational Accountability

- Track what matters. Containment rate, customer effort score, repeat-call rate, and agent-assist effectiveness tell a fuller story than deflection volume alone.

- Design fallback paths as first-class features. Every automated flow should have a graceful, context-aware escalation to a human.

- Budget for iteration, not just deployment. The cost of getting voice self-service wrong outweighs the savings of getting it live.
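These metrics are easy to compute from call records, and seeing them side by side shows why deflection alone misleads. The `Call` record, the 1-to-7 effort scale, and the 7-day repeat window below are hypothetical choices. Metric definitions vary between teams, so align them with your own reporting before comparing vendors.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import mean


@dataclass
class Call:
    customer_id: str
    started_at: datetime
    escalated_to_human: bool
    effort_score: int  # hypothetical post-call survey: 1 (easy) to 7 (hard)


def containment_rate(calls: list[Call]) -> float:
    """Share of calls resolved without a human handoff (expects a non-empty list)."""
    return sum(not c.escalated_to_human for c in calls) / len(calls)


def repeat_call_rate(calls: list[Call], window: timedelta = timedelta(days=7)) -> float:
    """Share of calls followed by another call from the same customer within `window`."""
    by_customer: dict[str, list[datetime]] = {}
    for c in sorted(calls, key=lambda c: c.started_at):
        by_customer.setdefault(c.customer_id, []).append(c.started_at)
    repeats = sum(
        1
        for times in by_customer.values()
        for earlier, later in zip(times, times[1:])
        if later - earlier <= window
    )
    return repeats / len(calls)


calls = [
    Call("A", datetime(2026, 3, 2, 9, 0), False, 2),
    Call("A", datetime(2026, 3, 4, 14, 0), False, 5),
    Call("B", datetime(2026, 3, 2, 11, 0), True, 4),
    Call("C", datetime(2026, 3, 3, 16, 0), False, 3),
]
print(f"containment {containment_rate(calls):.0%}")
print(f"repeat-call {repeat_call_rate(calls):.0%}")
print(f"avg effort  {mean(c.effort_score for c in calls):.1f}")
```

In this toy data, customer A’s first call counts as contained, yet A called back two days later with a worse effort score. A dashboard that tracks only deflection scores that as a win.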

Voice AI in Healthcare: From Calls to Clinical Insights

Voice Biomarkers Enter Clinical Validation

Twenty-five seconds of speech. That’s all it took for an ML model to detect signals consistent with moderate-to-severe depression, with 71.3% sensitivity and 73.5% specificity, in a study of 14,898 adults published in the Annals of Family Medicine. It’s the largest clinical validation study ever conducted for voice-based depression screening.
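Sensitivity and specificity alone don’t tell you how often a flagged patient actually has the condition. That depends on prevalence in the screened population. The sketch below computes positive and negative predictive values from the study’s figures. The 10% prevalence is our hypothetical assumption, not a number from the study.

```python
def predictive_values(sensitivity: float, specificity: float,
                      prevalence: float) -> tuple[float, float]:
    """Return (PPV, NPV) for a screening test at a given prevalence."""
    tp = sensitivity * prevalence              # true positives
    fn = (1 - sensitivity) * prevalence        # missed cases
    fp = (1 - specificity) * (1 - prevalence)  # false alarms
    tn = specificity * (1 - prevalence)        # correct all-clears
    return tp / (tp + fp), tn / (tn + fn)


# 71.3% sensitivity and 73.5% specificity from the study; 10% prevalence is ASSUMED.
ppv, npv = predictive_values(0.713, 0.735, 0.10)
print(f"PPV {ppv:.1%}, NPV {npv:.1%}")
```

At 10% prevalence, roughly three in four positive flags would be false positives, while a negative result is correct about 96% of the time. That profile fits a triage signal that routes patients to clinicians, not a diagnosis.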

Meanwhile, research in The Lancet demonstrated that Wav2Vec2-based models can detect mild cognitive impairment from unstructured conversations. Because these models operate on acoustic features rather than word meaning, they are language-independent, which enables passive screening at population scale. The healthcare voice AI vertical is growing at a 37.3% CAGR through 2030, and 70% of US medical groups report positive outcomes. That makes it one of the strongest voice AI market trends outside the contact center.

Voice is no longer a UX convenience in healthcare. It’s becoming a diagnostic layer. But that elevation brings heavier scrutiny: data privacy, consent protocols, and clinical validation governance need to be in place before, not after, deployment.

Agentic Voice Commerce Goes Transactional

On the commerce side, voice agents are crossing from answering questions to closing transactions. SoundHound debuted Amelia 7 at CES 2026, an agentic voice commerce platform for vehicles, TVs, and smart devices. On it, AI agents order food, make reservations, pay for parking, and book tickets entirely through voice. The company processed 30 million AI-driven customer interactions in 2025 alone. This is the shift from Conversational AI as an information layer to agentic systems that execute business processes end-to-end.

For enterprise teams evaluating where to pilot, the priority is clear: map your transaction workflows where AI voice bot solutions can reduce friction, and make sure enterprise-grade security covers every step from authentication to payment confirmation.
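One way to make sure security covers every step is to model the transaction as an explicit state machine. In that design, payment is unreachable without authentication and a spoken confirmation. The step names and transitions below are a hypothetical minimal flow, not SoundHound’s design. A production system would add timeouts, cancellation, and real identity and payment checks.

```python
from enum import Enum, auto


class Step(Enum):
    START = auto()
    AUTHENTICATED = auto()
    ORDER_CAPTURED = auto()
    CONFIRMED = auto()
    PAID = auto()


# Hypothetical transition table: PAID is reachable only via AUTHENTICATED and CONFIRMED.
TRANSITIONS = {
    Step.START: {Step.AUTHENTICATED},
    Step.AUTHENTICATED: {Step.ORDER_CAPTURED},
    Step.ORDER_CAPTURED: {Step.CONFIRMED},
    Step.CONFIRMED: {Step.PAID},
    Step.PAID: set(),
}


class VoiceTransaction:
    def __init__(self) -> None:
        self.step = Step.START
        self.audit_log: list[tuple[str, str]] = []  # every accepted transition

    def advance(self, target: Step) -> None:
        """Move to `target`, refusing any jump the table does not allow."""
        if target not in TRANSITIONS[self.step]:
            raise PermissionError(f"{self.step.name} -> {target.name} not allowed")
        self.audit_log.append((self.step.name, target.name))
        self.step = target


tx = VoiceTransaction()
try:
    tx.advance(Step.PAID)  # an agent tries to pay before authenticating
except PermissionError as err:
    print("blocked:", err)
for step in (Step.AUTHENTICATED, Step.ORDER_CAPTURED, Step.CONFIRMED, Step.PAID):
    tx.advance(step)
print(tx.step.name, len(tx.audit_log))
```

Keeping the rules in a table rather than in the agent’s prompt means an LLM cannot talk its way past a missing confirmation. The audit log also doubles as evidence for compliance reviews.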

Capital Markets Signal Infrastructure-Level Confidence

Voice AI venture funding jumped from roughly $315 million in 2022 to $2.1 billion in 2024, nearly 7x growth. More than 200 startups raised over $1.5 billion in 2025 alone. A new unicorn class has emerged in months: ElevenLabs at $11B, Decagon at $4.5B, Parloa at $3B, Deepgram at $1.3B.

Big tech is buying in too: Google acquired Hume AI for emotional intelligence in Gemini, Meta acquired Play AI for conversational voice, and Apple paid $2 billion for Q.ai to bring on-device audio processing and health metrics to its wearables.

The market projection, from $2.4 billion in 2024 to $47.5 billion by 2034 at a 34.8% CAGR, confirms what the deal flow already suggests. The voice AI market trends are unambiguous: investors are funding voice AI not as a feature bet but as infrastructure across CCaaS, healthcare, telco, retail, and automotive. Platform economics are taking hold.
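The growth figures are easy to sanity-check. Compound annual growth rate is (end / start)^(1 / years) - 1. The sketch below confirms that the 2024 to 2034 projection implies about 34.8% a year and that the 2022 to 2024 funding jump is about 6.7x.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


print(f"Market CAGR 2024-2034: {cagr(2.4, 47.5, 10):.1%}")
print(f"Funding multiple 2022-2024: {2.1e9 / 315e6:.1f}x")
```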

What this means for procurement: evaluate voice AI vendors the way you’d evaluate any infrastructure partner, on platform durability, API flexibility, compliance-readiness, and ecosystem depth. And factor consolidation risk into your strategy. In a market moving this fast, today’s point-solution vendor may be tomorrow’s acquisition target.

Regulation & Compliance: The New Boundary Conditions

August 2, 2026: The Deadline Every Voice AI Team Should Circle

If there’s one voice AI trend of 2026 no team can afford to ignore, it’s regulatory convergence. Brussels, Washington, and Beijing are arriving at the same rule at the same time: if it sounds human but isn’t, you must say so.

EU AI Act

- Article 50(1): AI systems interacting with humans must disclose their AI nature no later than the first interaction, unless that is obvious from context.

- Article 50(2): synthetic audio must be marked in a machine-readable format via watermarking or metadata.

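- Penalties: up to €15 million or 3% of global annual turnover for breaching the Article 50 transparency obligations. The higher ceiling of €35 million or 7% applies to prohibited practices, such as the workplace emotion-detection ban.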

- Deadline: August 2, 2026. First draft Code of Practice published December 17, 2025; final code expected June 2026.

- Already in effect since February 2, 2025: emotion detection AI prohibited in workplace contexts, including analyzing employee calls.

United States

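- FCC ruled that AI-generated voices count as “artificial” voices under the TCPA, making them illegal in robocalls without prior consent (February 2024). It followed with a landmark $6 million fine for deepfake robocalls impersonating President Biden.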

- TAKE IT DOWN Act (signed May 2025): criminalizes publishing nonconsensual intimate imagery, including AI-generated deepfakes; platform takedown requirements take effect May 2026.

- NO FAKES Act (pending): federal IP right in one’s own voice, surviving death for 70 years.

- 46 states have enacted deepfake legislation; 146 bills introduced in 2025 alone.

China

- AI Labeling Measures (effective September 1, 2025): explicit audio prompts at the beginning or end of AI-generated audio, plus implicit metadata labels.

- 2025 “Qinglang” campaign: 3,500+ problematic AI products handled, 960,000+ pieces of illegal content removed.

What This Means for Your Voice Stack

Every voice agent, IVR, or automated call system now requires disclosure mechanisms. Synthetic content needs labeling at both generation and distribution. Enterprise-grade security extends into provenance tracking: who generated the audio, when, and with what model.
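At its simplest, provenance tracking means writing a record alongside every synthetic clip. The sketch below builds a hypothetical JSON sidecar keyed by a SHA-256 hash of the audio. The field names are illustrative, and the sketch does not implement any standard manifest format such as C2PA.

```python
import hashlib
import json
from datetime import datetime, timezone


def provenance_record(audio_bytes: bytes, model_name: str, generated_by: str,
                      disclosure_played: bool) -> dict:
    """Build a JSON-serializable provenance sidecar for one synthetic clip.

    Field names are hypothetical; the hash ties the record to the exact audio bytes.
    """
    return {
        "sha256": hashlib.sha256(audio_bytes).hexdigest(),
        "synthetic": True,
        "model": model_name,                 # which model produced the audio
        "generated_by": generated_by,        # which flow or team triggered it
        "generated_at": datetime.now(timezone.utc).isoformat(),
        "disclosure_played": disclosure_played,
    }


record = provenance_record(b"\x00\x01fake-pcm", "tts-model-v1", "ivr-billing-flow", True)
print(json.dumps(record, indent=2))
```

A metadata record like this can be stripped when audio is re-encoded or forwarded. That is why it is usually paired with watermarking embedded in the signal itself.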

And here’s the uncomfortable wrinkle: regulation assumes synthetic audio can be reliably detected. It often can’t. A Northwestern University study found detection models lose 43% of their performance against realistic modern voice cloning.

Your Compliance Playbook Before August 2026

- Audit all customer-facing voice touchpoints for disclosure compliance.

- Label synthetic audio with metadata tagging and watermarking as a standard pipeline step.

- Assemble a cross-functional compliance team: legal, product, engineering, CX.

- Document everything: consent flows, data retention, model provenance, escalation procedures.
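The first item on that list can start as a simple script over your touchpoint inventory. This is a hypothetical sketch: the `Touchpoint` record and the disclosure phrases are placeholders. Keyword matching is only a coarse first pass before legal review of the actual wording.

```python
from dataclasses import dataclass


@dataclass
class Touchpoint:
    name: str
    opening_prompt: str           # first thing the caller hears
    labels_synthetic_audio: bool  # metadata or watermark applied to generated audio


# HYPOTHETICAL phrases; approved wording should come from legal review.
DISCLOSURE_PHRASES = ("virtual assistant", "ai assistant", "automated assistant")


def audit(touchpoints: list[Touchpoint]) -> list[str]:
    """Return one finding per missing disclosure or missing synthetic-audio label."""
    findings = []
    for tp in touchpoints:
        opening = tp.opening_prompt.lower()
        if not any(phrase in opening for phrase in DISCLOSURE_PHRASES):
            findings.append(f"{tp.name}: no AI disclosure in opening prompt")
        if not tp.labels_synthetic_audio:
            findings.append(f"{tp.name}: synthetic audio not labeled")
    return findings


inventory = [
    Touchpoint("billing-ivr", "Hi, I'm an AI assistant for Acme billing.", True),
    Touchpoint("outbound-reminders", "Hello, this is Acme calling about your appointment.", False),
]
for finding in audit(inventory):
    print(finding)
```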

Organizations that treat compliance as a trust differentiator will earn customer confidence while competitors scramble. At Master of Code Global, our ISO 27001-certified delivery process means compliance architecture is built into voice AI projects from the first sprint.

From Trend Awareness to a 90-Day Playbook

The voice AI trends of 2026 that create real business value have three things in common: they can be operationalized, measured, and governed. Architecture shifts unlock new capabilities across omnichannel enterprise workflows. Industry dynamics across telcos, contact centers, healthcare, and agentic commerce define where that value actually lands. And regulation in the EU, US, and China sets the boundaries everyone must design within.

These trends and innovations point in one direction: Conversational AI’s next chapter is agentic, multimodal, and compliance-aware by default. The $1.23 billion that flowed into voice AI in January 2026 alone wasn’t a bet on hype. It was a bet on enterprise workflows about to be rebuilt from the ground up.

So here’s the play: pick 2–3 voice AI trends from this article that matter most to your vertical. Build a 90-day roadmap, evaluate vendors, run a focused pilot, measure what moves. If you want a partner who’s done this over 1,000 times across finance, healthcare, eCommerce, and automotive, talk to Master of Code Global. We’ll help you turn the trend that fits into the outcome that counts.