Most companies chasing artificial intelligence right now are spending real money on tools that were never part of a real plan. The result is a growing stack of pilots that impress in demos and stall in production with no clear answer for why.

This guide is for the people responsible for fixing that. It covers what a working enterprise AI strategy actually requires in 2026, why most approaches break down before they deliver, and what the organizations generating genuine value from AI do differently. What you will find here is not theory. It is what our teams have learned from building intelligent solutions across industries, company sizes, and levels of organizational readiness. Let’s get right into it.

Key Takeaways

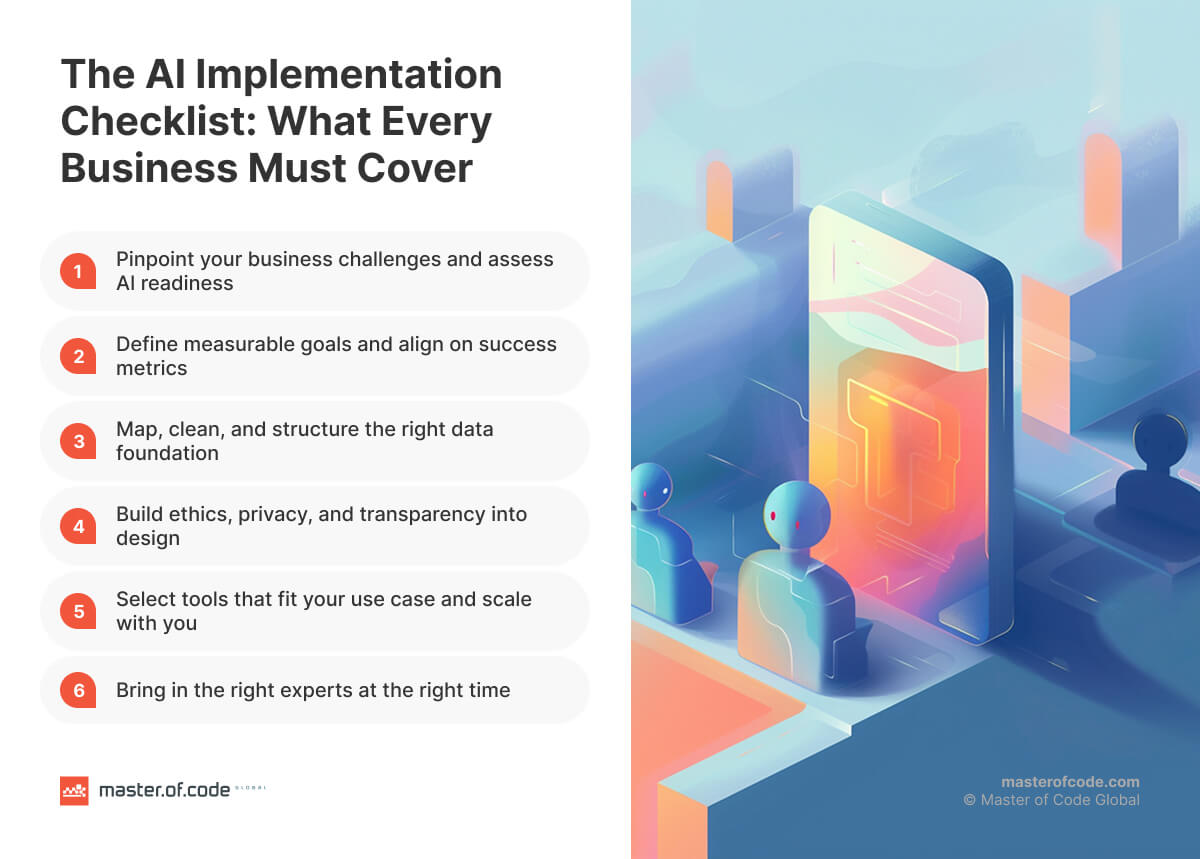

- Having tools is not the same as having a strategy. An enterprise-wide strategy ties every initiative to a business outcome – revenue, cost, or risk – from the start.

- Before designing a strategy, audit where the organization genuinely stands. The level you are at determines the approach you need, not the one you want.

- No single framework works for every context. The best strategies combine portfolio-level prioritization, maturity-stage discipline, and execution rigor – adjusted for where the company actually is.

- Most failures trace back to the same root causes: initiatives disconnected from business priorities, data that was never ready, and change management that arrived too late.

- Use case prioritization and workflow redesign before deployment are the two practices most strongly correlated with actual value creation.

- Competitive advantage goes to organizations that treat AI as a long-term capability built around real systems and real constraints, not a technology trend to keep pace with.

What Is an Enterprise AI Strategy

Most organizations using artificial intelligence today don’t have a specific strategy. They just have tools powered by this tech.

The difference matters. Deploying a copilot, running a proof of concept, and automating a single workflow are useful starting points, but none of them is a strategy.

An enterprise AI strategy is a coordinated, organization-wide plan that ties investment to concrete business objectives. It spans use case selection, data infrastructure, governance, workforce readiness, and how the company is structured to sustain artificial intelligence at scale. Without that connective tissue, individual initiatives stay isolated. They deliver local wins that never compound.

It also helps to distinguish three related concepts that often get conflated:

- AI strategy – what you will do with the technology, and why.

- AI operating model – how the organization is structured and governed to deliver it.

- Enterprise AI roadmap – the sequenced, time-bound execution plan.

Strategy comes first. The operating model and roadmap follow from it, not the other way around.

Yet only 40% of organizations have a formal strategy in place, according to Deloitte’s 2026 State of AI report. The rest are making tool-by-tool decisions without a coherent enterprise-wide strategy to guide them. That’s a structural problem, not a technology one. And it explains, more than anything else, why adoption is nearly universal while meaningful business impact remains rare.

AI Maturity Assessment: Know Where You Stand Before You Plan

Building a strategy without first assessing where your enterprise stands is one of the most common – and costly – mistakes. It leads to mismatched ambitions, misallocated budgets, and the phenomenon consultants call “pilot purgatory”: a graveyard of promising experiments that never create value (95% of organizations are getting zero return).

An AI maturity assessment is the honest audit that prevents this. It tells you not what you want to do with artificial intelligence, but what you’re actually ready to do with it right now.

Most maturity models map organizations across four broad levels:

- Level 1. Exploring: AI use is experimental and isolated. There’s no defined ownership, data is siloed across systems, and initiatives depend on individual enthusiasm rather than organizational structure.

- Level 2. Building: Pilots are actively underway. Early governance is forming, AI leadership is emerging, and the organization is starting to learn from its first deployments.

- Level 3. Scaling: Artificial intelligence is integrated into core workflows with measurable outcomes. Cross-functional collaboration is in place, and the enterprise has a repeatable process for moving from pilot to production.

- Level 4. Leading: The tech is embedded in the business model itself. Governance operates as infrastructure, and the company runs continuous learning loops that improve performance over time.

The level you’re at determines the strategy you need, not the one you want.

A thorough audit examines four dimensions: data readiness (quality, accessibility, and governance), technical capability (infrastructure and integration architecture), organizational readiness (leadership alignment and AI literacy), and culture (how the organization handles change and failure). Skipping any one of them produces a blind spot that surfaces later at a much higher cost. As Gartner’s 2025 research found, 57% of companies say their data is not AI-ready. Yet most skip the data preparedness dimension entirely in early planning.
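One way to keep an audit honest is to record a rating for each dimension and flag anything left unassessed. The Python sketch below is a minimal illustration, not a formal assessment method: the function name `summarize_audit`, the 1 to 4 rating scale per dimension, and the rule that planning is pegged to the weakest assessed dimension are all assumptions made for the example.

```python
# Level names from the four-level maturity model described above.
LEVELS = {1: "Exploring", 2: "Building", 3: "Scaling", 4: "Leading"}

# The four audit dimensions named in the text.
DIMENSIONS = (
    "data_readiness",
    "technical_capability",
    "organizational_readiness",
    "culture",
)


def summarize_audit(ratings: dict[str, int]) -> dict[str, object]:
    """Summarize an audit in which each assessed dimension is rated 1-4.

    Dimensions missing from `ratings` are reported as blind spots.
    Assumption (not from the article): planning is pegged to the
    weakest assessed dimension.
    """
    unknown = set(ratings) - set(DIMENSIONS)
    if unknown:
        raise ValueError(f"unknown dimensions: {sorted(unknown)}")
    if any(level not in LEVELS for level in ratings.values()):
        raise ValueError("ratings must be integers from 1 to 4")

    blind_spots = [d for d in DIMENSIONS if d not in ratings]
    if not ratings:
        return {
            "blind_spots": blind_spots,
            "weakest_dimension": None,
            "planning_level": None,
        }

    weakest = min(ratings, key=ratings.get)
    return {
        "blind_spots": blind_spots,
        "weakest_dimension": weakest,
        "planning_level": LEVELS[ratings[weakest]],
    }


# Hypothetical audit that skipped data readiness entirely.
summary = summarize_audit(
    {"technical_capability": 3, "organizational_readiness": 2, "culture": 3}
)
print(summary)
```

In this hypothetical run, data readiness shows up as a blind spot and organizational readiness is the constraint, so the plan would be scoped to the Building level until both are addressed.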

As Olha Hrom, Director of Pre-Sales Strategy & Delivery at Master of Code, puts it: “Before committing to any technology, the organization needs to answer one honest question – what exactly are we trying to fix?” That question only gets a reliable answer when the assessment is done first.

Enterprise AI Strategy Framework: What the Best Approaches Have in Common

Dozens of frameworks exist, published by management consulting firms, technology companies, research institutions, and practitioners. They differ in naming, sequencing, and emphasis. But when you examine them side by side, the same structural logic emerges.

Every credible framework addresses four core pillars:

- Data governance and readiness – the foundation without which no model performs reliably in production.

- AI operating model and change management – who owns it, how decisions are made, and how the organization adapts at scale.

- Business alignment – connecting investments to revenue, cost, risk, or growth objectives.

- AI governance framework and ethical AI – risk management, ethical guardrails, regulatory compliance, and oversight.

What the research also consistently shows is that the ratio of effort matters as much as the components themselves. Companies that dedicate roughly 70% of their AI resources to people and process change, 20% to technology and data infrastructure, and 10% to algorithms tend to outperform those that invert this balance. Most enterprises do the opposite – heavy on tooling, light on the organizational work that makes tooling stick.
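To make the ratio concrete, the Python sketch below splits a budget 70/20/10 and measures how far an actual spending pattern drifts from that target. The budget figures and the names `allocate` and `share_gaps` are hypothetical, chosen only for illustration.

```python
# Target split described in the text:
# 70% people and process, 20% technology and data, 10% algorithms.
TARGET_SHARE = {
    "people_and_process": 70,
    "technology_and_data": 20,
    "algorithms": 10,
}


def allocate(total_budget: int) -> dict[str, int]:
    """Split a budget (in whole currency units) according to TARGET_SHARE."""
    return {area: total_budget * pct // 100 for area, pct in TARGET_SHARE.items()}


def share_gaps(actual_spend: dict[str, int]) -> dict[str, float]:
    """Percentage-point gap between actual and target share per area.

    Positive means the area receives more than its target share.
    """
    total = sum(actual_spend.values())
    return {
        area: round(100 * actual_spend[area] / total - TARGET_SHARE[area], 1)
        for area in TARGET_SHARE
    }


# Hypothetical figures, for illustration only.
plan = allocate(2_000_000)
tooling_heavy = {
    "people_and_process": 300_000,
    "technology_and_data": 1_200_000,
    "algorithms": 500_000,
}
gaps = share_gaps(tooling_heavy)
print(plan)
print(gaps)
```

A large negative gap on `people_and_process` is the inverted pattern the paragraph describes: heavy on tooling, light on organizational work.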

Four Framework Types and What to Know About Each

Rather than cataloguing individual frameworks by name, it is more useful to understand the four structural types they fall into. Each has a distinct logic, and each has genuine trade-offs.

Type 1. The Pillar Model

The pillar model defines five or six strategic dimensions that must be developed in parallel. The underlying philosophy is that AI transformation cannot succeed if any single dimension is neglected.

Core characteristics:

- Covers strategy, talent, operating model, technology, data, and adoption simultaneously.

- Assumes all dimensions are interdependent and must progress together.

- Requires a dedicated cross-functional leadership team to manage each workstream.

- Typically runs on an 18- to 36-month strategic horizon.

Strength: Comprehensive by design. It forces leaders to address organizational capability gaps alongside tech decisions, which is where most initiatives actually break down.

Limitation: Running all pillars at once requires significant executive sponsorship and resources. For mid-market organizations or those early in their journey, this model can feel paralyzing before it feels empowering.

Type 2. The Maturity Progression Model

This type maps the organization’s journey from experimentation to full AI integration across defined stages, with specific readiness criteria at each transition point.

Core characteristics:

- Organizes AI development into sequential, clearly defined levels.

- Each stage has entry conditions before the next begins.

- Progress is measured against capability benchmarks, not just time elapsed.

- Governance and data infrastructure requirements increase with each level.

Strength: Gives organizations a realistic, stage-appropriate path. It prevents skipping steps that appear cosmetic but are structurally necessary, and aligns expectations across leadership teams that may have very different starting assumptions.

Limitation: Without deliberate urgency, this model can create a “wait until we’re ready” culture that delays meaningful action indefinitely. Readiness is built through doing, not just preparing.

Type 3. The Portfolio and Investment Model

The portfolio model categorizes AI investments by strategic intent, then sequences them through a value-versus-feasibility prioritization lens.

Core characteristics:

- Separates initiatives into buckets: protect existing operations, extend competitive position, pursue new business models.

- Uses scoring matrices to rank use cases by commercial impact, technical feasibility, and time-to-value.

- Balances short-term wins against longer-term capability building.

- Connects each commitment directly to a measurable business outcome.

Strength: Translates AI ambition into financial terms leadership teams can act on. This makes board-level conversations more productive and helps companies avoid concentrating budget in low-return experiments while underfunding the use cases with real competitive advantage potential.

Limitation: This model requires genuine strategic clarity about the company’s direction to function well. Organizations without a defined competitive position often struggle to determine which bets are worth making.
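The scoring matrix mentioned under the core characteristics can be sketched in a few lines of Python. This is an illustrative sketch only: the weights, the 1 to 5 rating scale, the bucket labels, and the example use cases are assumptions, since the three criteria are named here without any statement of their relative importance.

```python
from dataclasses import dataclass

# Hypothetical weights; adjust to reflect the organization's own priorities.
WEIGHTS = {"impact": 0.5, "feasibility": 0.3, "time_to_value": 0.2}


@dataclass(frozen=True)
class UseCase:
    name: str
    bucket: str         # "protect", "extend", or "new_business_model"
    impact: int         # commercial impact, 1 (low) to 5 (high)
    feasibility: int    # technical feasibility, 1 (hard) to 5 (easy)
    time_to_value: int  # 1 (slow) to 5 (fast)
    outcome: str        # the measurable business outcome it is tied to

    def score(self) -> float:
        """Weighted sum of the three criteria, rounded to two decimals."""
        return round(sum(w * getattr(self, k) for k, w in WEIGHTS.items()), 2)


def rank(use_cases: list[UseCase]) -> list[UseCase]:
    """Order use cases from highest to lowest weighted score."""
    return sorted(use_cases, key=lambda u: u.score(), reverse=True)


# Hypothetical portfolio, for illustration only.
portfolio = [
    UseCase("supply chain optimization", "extend", 5, 2, 2, "logistics cost per order"),
    UseCase("demand forecasting", "protect", 4, 4, 5, "forecast error rate"),
    UseCase("internal knowledge assistant", "protect", 2, 5, 4, "hours saved per employee"),
]

ranked = rank(portfolio)
for use_case in ranked:
    print(f"{use_case.score():.2f}  {use_case.bucket:<8}  {use_case.name}")
```

Keeping `bucket` and `outcome` on every entry reflects two of the characteristics above: each initiative belongs to a strategic bucket and is tied to a measurable result before it is ranked.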

Type 4. The Lifecycle and Execution Model

The lifecycle model breaks AI strategy into sequential phases, from discovery and data readiness through build, validation, and scale.

Core characteristics:

- Defines clear graduation criteria for moving from pilot to production.

- Maps delivery phases to team structures, tooling decisions, and governance checkpoints.

- Emphasizes measurement cadence – what gets tracked at 30, 90, and 180 days.

- Treats each deployment – whether custom AI solutions or integrated platforms – as a product with an owner, not a project with a deadline.

Strength: Highly actionable. Practitioners working inside delivery cycles tend to find this the most useful model because it maps to how real implementation actually works, not how it looks on a strategy slide.

Limitation: The emphasis on execution can come at the expense of strategic thinking. Companies that adopt this model without business alignment at the leadership level risk building the wrong things with considerable efficiency.

What the Best Strategies Actually Do

The organizations generating real value from AI rarely commit to a single framework type. What works in practice is a combination:

- the strategic clarity of the portfolio model to determine what to build and in what order,

- the stage-appropriate discipline of the maturity model to set realistic expectations,

- the execution rigor of the lifecycle model to move from decision to deployment.

There is also a dimension that most frameworks underweight in 2026: enterprise AI agents.

When intelligent systems can take autonomous actions rather than simply generating outputs, governance requirements, operating model design, and human-in-the-loop policies become structurally more complex. The frameworks built around Generative AI business strategy do not automatically extend to agents. Enterprises building an AI portfolio today need to account for this distinction early, before governance gaps become compliance risks.

In practice, this might look like:

A logistics company developing its enterprise AI strategy might begin by mapping business pain points to strategic objectives, identifying three high-priority areas where AI can reduce cost or improve speed. It then assesses data readiness and talent gaps across its operating divisions before sequencing a 12-month roadmap that delivers one measurable win in demand forecasting within 90 days, while building toward a larger supply chain optimization program.

That combination of strategic framing, honesty about maturity, and phased execution is what separates a functioning strategy from theory.

Best Practices for Winning Enterprise AI Strategy Implementation

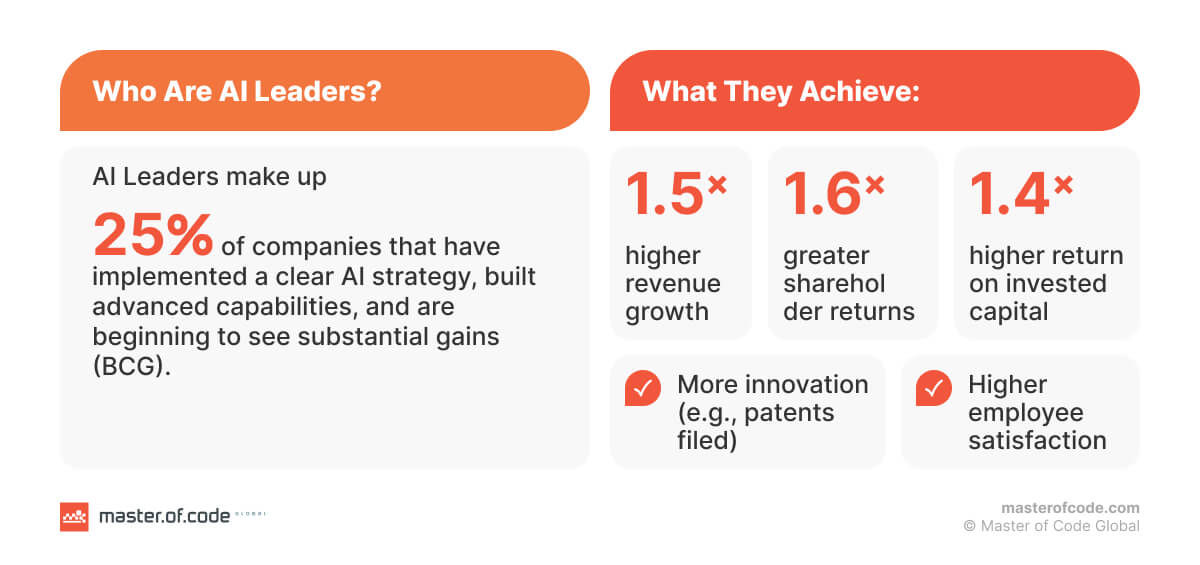

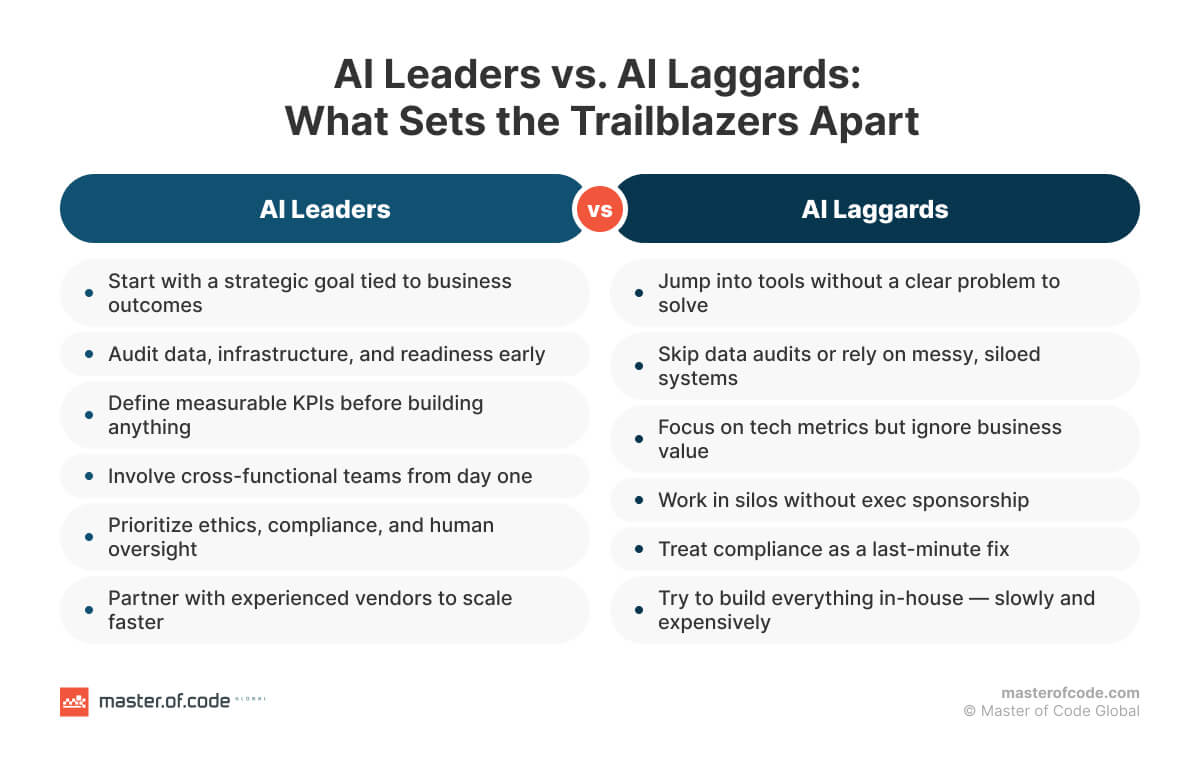

BCG’s research puts it plainly: artificial intelligence leaders achieve 1.7× revenue growth, 3.6× three-year total shareholder return, and 1.6× EBIT margin over laggards. The difference is not budget size or model sophistication. It is a set of repeatable practices applied consistently across strategy, data, people, governance, and measurement.

Strategy and Alignment

- Start with business pain, not technology. The strongest enterprise strategies define the problem before selecting any solution.

- Set growth objectives alongside efficiency targets. Companies that prioritize revenue and innovation, not just cost reduction, see the highest impact from investments.

- Make the strategy bidirectional. Business goals shape the AI agenda, but emerging capabilities should also inform and update direction.

- Treat the AI strategy as a living document. Organizations that revisit and refresh it quarterly outpace those that treat it as a one-time planning exercise.

- Connect every initiative to a named outcome with a measurable target before development begins.

Data and Infrastructure

- Allocate at least half of the implementation timeline and budget to data readiness before model selection.

- Build unified data governance from the start. Trustworthy, repeatable, auditable AI outputs depend on it.

- Treat data as a product, not an IT prerequisite. Each business unit should own and maintain its data layer rather than rely on a central function to prepare it on request.

- Break down silos early. Eleven fragmented sources cannot support a coherent AI portfolio; consolidation is a strategic decision, not a technical one.

People and Change Management

- Redesign workflows before implementing artificial intelligence. This is the single strongest predictor of AI impact. Deploying tech into unchanged processes amplifies existing inefficiencies rather than eliminating them.

- Plan for adoption from day one, not after launch. Organizations that build adoption programs into the strategy phase achieve significantly higher utilization rates than those that treat it as a post-deployment activity.

- Provide structured, role-specific training. Employees with fewer than five hours of AI training show negligible behavior change.

- Meet people where they are. The “adapt or die” framing consistently backfires. Leaders who build AI fluency through support and demonstration, rather than pressure, see faster and more durable usage.

Governance and Responsible AI

- Integrate artificial intelligence controls into existing risk and compliance structures from the start. Parallel governance functions are slower to deploy and less likely to last.

- Use an established risk management framework as a baseline. Models such as NIST AI RMF’s four-function cycle – Govern, Map, Measure, Manage – give teams a structured, auditable starting point.

- Govern agentic AI separately. Autonomous systems require dedicated access controls, behavioral monitoring, and human-in-the-loop policies that go beyond a Generative AI governance framework.

- Apply responsible AI principles at the design stage, not as a post-launch review.

Measurement and ROI

- Define ROI checkpoints at 3, 6, and 12 months before the first line of model code is written. Organizations that do this are significantly more likely to reach scale.

- Track both hard and soft KPIs. Hard: labor cost savings, error reduction, revenue impact per use case. Soft: employee adoption rates, decision quality, organizational agility.

- Report in business language, not model language. “Claims processing cost reduced by $2.3M in Q2” secures the next investment cycle. “Model accuracy improved by 12%” rarely does.

- Use a three-tier ROI framework: realized returns in the 18 to 36-month window, directional proof points at 3 to 12 months, and capability value as an ongoing strategic measure.
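The checkpoints and the three tiers can be lined up in a small lookup, sketched below in Python. The function name `evidence_expected` and the tier labels are hypothetical. The text does not say what applies between months 12 and 18, so the sketch reports only the ongoing tier there.

```python
# Checkpoints and windows as stated in the text, in months after launch.
CHECKPOINT_MONTHS = (3, 6, 12)
DIRECTIONAL_WINDOW = range(3, 13)  # 3 to 12 months inclusive
REALIZED_WINDOW = range(18, 37)    # 18 to 36 months inclusive


def evidence_expected(month: int) -> list[str]:
    """Return the ROI tiers a review held in the given month should report on."""
    tiers = ["capability value"]  # treated as an ongoing measure
    if month in DIRECTIONAL_WINDOW:
        tiers.append("directional proof points")
    if month in REALIZED_WINDOW:
        tiers.append("realized returns")
    return tiers


# The three checkpoints from the text plus a hypothetical 24-month review.
schedule = {month: evidence_expected(month) for month in (*CHECKPOINT_MONTHS, 24)}
for month, tiers in schedule.items():
    print(f"month {month:>2}: {', '.join(tiers)}")
```

Read this way, all three early checkpoints ask for directional evidence, and realized returns are only expected at later reviews.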

As Thomas Davenport, Distinguished Professor at Babson College and Senior Advisor to Deloitte’s AI Practice, notes:

“The businesses and organizations that succeed with AI will be those that invest steadily, rise above the hype, make a good match between their business problems and the capabilities of AI, and take the long view.”

How Master of Code Global Approaches Enterprise AI

When companies come to us, they are rarely at the same starting point. Some are running their first pilot. Others already have tools in place that are not delivering the impact they expected. In both cases, the work begins the same way: understanding what role AI should realistically play in the organization before anything is built or changed.

We do not treat implementation as a fixed recipe. Our approach operates at the program level – helping clients evaluate use cases, assess readiness, and determine where experimentation makes sense and where it does not. Sometimes the right move is to build. Other times it is to pause, audit what already exists, or narrow the scope before going further.

From a delivery standpoint, we are platform-agnostic by design. Rather than recommending a specific tool or model, we focus on fitting the solution into the client’s existing systems, data landscape, and operating model. In enterprise environments where infrastructure, security, and compliance requirements are non-negotiable, that flexibility is foundational.

Execution is handled by a dedicated, cross-functional team that stays with the initiative over time. Continuity of our enterprise AI development services is intentional. As context builds around your business processes, constraints, and past decisions, delivery becomes faster and more predictable. Lessons learned do not disappear with team changes, and you are not re-explaining the same decisions every few months.

Transparency is also a core part of how we work. We involve client teams in decision-making, explain trade-offs, and document why certain choices are made. The goal is not dependency. It is building internal understanding of how custom AI development actually works in practice, from planning and validation through to production and iteration.

This is the difference between adopting artificial intelligence and operationalizing it well. Master of Code Global combines strategic guidance with hands-on delivery expertise to help enterprises build solutions that align with real workflows, real constraints, and real performance expectations. Because we work across custom, platform-based, and hybrid models, we are able to recommend what is most effective for the business, not what is most convenient to sell.

Reach out to discuss how to create an enterprise AI strategy tailored to your business.