Picking the wrong tech partner doesn’t just slow you down. It can set your entire program back by a year. Nearly 3 in 4 CIOs now regret a major AI vendor decision made in the last 18 months. Not because the technology failed them. Because the partner did.

So if you’re trying to figure out how to choose an AI development company and the process feels murkier than it should, you’re not imagining it. The market is flooded with vendors who all sound credible, all have polished decks, and all promise transformation. Telling them apart takes more than a shortlist and a few demos.

This guide cuts through that. You’ll find practical criteria, real questions to ask, the red flags that actually matter, and a straight look at what good AI implementation partners do differently. All of it is backed by people who’ve been through the process themselves, so you don’t have to learn it the hard way.

Key Takeaways

- The right AI development company brings domain expertise, technical transparency, and a clear approach to deployment and monitoring, not just a polished demo.

- Red flags to watch for: vendors who only speak in success stories, skip validation on complex use cases, give vague answers on data privacy, or can’t provide verifiable references.

- Before signing anything, ask hard questions about data ownership, model flexibility, team composition, post-deployment support, and exit terms.

- The best partners start with your problem, leave your team stronger, and treat the work after launch as seriously as the build itself.

What to Look for in an AI Implementation Partner

Not every firm calling itself an AI vendor actually is one. And that’s exactly what makes knowing how to choose an AI development company so important. Yexi Liu, CIO at Rich Products, put it plainly after evaluating dozens of providers:

Many Generative AI vendors claim they offer an end-to-end AI solution. But the reality is many of these companies are still in the early stages.

And he’s right. A lot of what’s marketed as deep AI expertise is, as Sandeep Agrawal, Legal Technology and Alliances Leader at PricewaterhouseCoopers, puts it, just “a thin wrapper around GPT.”

So what should you actually be looking for?

#1 Domain Experience Over Generic Capability

A vendor delivering custom AI development services in your industry will already understand your data constraints, compliance requirements, and workflow logic. That context shortens timelines, reduces the back-and-forth that kills momentum early on, and has a direct impact on the cost of implementing AI. Ask for case studies in your vertical: not project descriptions, but documented work with measurable outcomes.

#2 Technical Depth You Can Verify

Ask to speak with the engineers, not just the account team. A solid partner can walk you through their model selection rationale, explain how they handle data quality issues, and describe their data readiness assessment process before a single line of code is written.

Gartner analyst Arun Chandrasekaran recommends going further: ask which models they’re currently using and whether they have the flexibility to swap them out. “The model they’re using today won’t be the model they’ll use 12 months down the line,” he points out. Lock-in on a specific model is a risk that compounds quietly over time.

#3 Technology Agnosticism

Closely related, but distinct. A strong AI partner doesn’t walk in with a preferred tool and fit your problem to it. They evaluate options based on your use case, test different approaches as needed, and advise on what fits best. Ask whether they’ve worked across multiple LLM providers and model architectures. Ask if they run comparative evaluations before committing to a stack.

As Olga Hrom, Director of Pre-Sales Strategy & Delivery at Master of Code Global, puts it:

We’re not vendor-locked, so we can recommend the best combination of technologies for your specific situation rather than forcing you into a single platform’s constraints.

This allows us to orchestrate a best-of-breed tech stack tailored to the client’s existing infrastructure, ensuring seamless integration and the ability to scale or swap components as your requirements evolve.
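One way to picture what "able to swap components" means in code is a thin interface between your application and whichever model sits behind it. The sketch below is illustrative only: `TextModel`, `EchoModel`, `UppercaseModel`, and `SupportAssistant` are hypothetical names, the two "providers" are local stand-ins with no network calls, and none of this is taken from any vendor's actual implementation.

```python
from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """Anything that can turn a prompt into a completion."""

    def complete(self, prompt: str) -> str: ...


@dataclass
class EchoModel:
    """Stand-in for provider A (hypothetical, no network calls)."""
    name: str = "echo-v1"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


@dataclass
class UppercaseModel:
    """Stand-in for provider B (hypothetical, no network calls)."""
    name: str = "upper-v1"

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt.upper()}"


class SupportAssistant:
    """Application code depends only on the TextModel interface."""

    def __init__(self, model: TextModel) -> None:
        self.model = model

    def answer(self, question: str) -> str:
        return self.model.complete(f"Customer asks: {question}")


assistant = SupportAssistant(EchoModel())
first = assistant.answer("Where is my order?")

# Swapping providers is a one-line change; SupportAssistant is untouched.
assistant.model = UppercaseModel()
second = assistant.answer("Where is my order?")
```

If a vendor's architecture looks roughly like this, changing models 12 months from now is a configuration decision. If model-specific calls are scattered through the business logic, it is a rewrite.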

#4 Deployment and Monitoring Maturity

This one gets skipped in most vendor conversations, but it shouldn’t. Ask how the company approaches MLOps or LLMOps for Generative AI projects. That might include model deployment pipelines, performance tracking, data quality check-ups, retraining schedules, prompt versioning, and hallucination oversight. A team that can’t describe their approach clearly is likely building solutions that work in demos but degrade quietly in production.
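To make two of those items less abstract, here is a minimal sketch of prompt versioning paired with a crude output check. It assumes prompts are plain text templates and that "quality" can be approximated by whether an answer contains a required phrase, which is a deliberate simplification. `PromptRegistry`, `prompt_version`, and `pass_rate` are hypothetical names, not part of any specific LLMOps tool.

```python
import hashlib
from dataclasses import dataclass, field


def prompt_version(template: str) -> str:
    """Derive a short, stable version id from the prompt text itself."""
    return hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]


@dataclass
class PromptRegistry:
    """In-memory registry; a real one would live in version control or a database."""
    versions: dict[str, str] = field(default_factory=dict)
    history: list[str] = field(default_factory=list)

    def register(self, template: str) -> str:
        version = prompt_version(template)
        if version not in self.versions:
            self.versions[version] = template
            self.history.append(version)
        return version

    def current(self) -> str:
        """Return the most recently added template."""
        return self.versions[self.history[-1]]


def pass_rate(outputs: list[str], required_phrases: list[str]) -> float:
    """Share of outputs that contain their required phrase (a crude quality check)."""
    hits = sum(
        phrase.lower() in out.lower()
        for out, phrase in zip(outputs, required_phrases)
    )
    return hits / len(outputs)


registry = PromptRegistry()
v1 = registry.register("Answer briefly: {question}")
v2 = registry.register("Answer briefly and cite the policy: {question}")
same = registry.register("Answer briefly: {question}")  # already known, so nothing is added

rate = pass_rate(["Refunds take 5 days", "I don't know"], ["refund", "policy"])
```

The point to probe with a vendor is not this exact mechanism but whether every production answer can be traced back to a specific prompt version and compared against a fixed set of checks before a change ships.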

Another sign of a mature AI development company is proprietary tooling built from real project experience. For example, at Master of Code Global, seeing the same bottlenecks across several projects led us to build LOFT – our open-source LLM orchestration framework. It enables us to reduce initial project setup effort by 43% and save up to 20% of budget when scaling before MVP.

#5 Built-In Security and Compliance

In regulated industries, this is table stakes. Look for verifiable certifications and documented compliance – ISO, SOC 2, GDPR, or sector-specific standards like HIPAA or PCI DSS. This requirement came up repeatedly in The State of AI in Financial Services, a report by Master of Code Global and Infobip based on 200 executive insights.

A Senior Product Manager at a B2B Software Company was direct about it: “Vendor evaluation now hinges on visible proof of compliance. Without this transparency, vendors are unlikely to win approval from CIOs or compliance officers.” Certifications aren’t mere paperwork: they signal how a company thinks about risk before anything goes wrong.

#6 A Track Record You Can Independently Verify

Logo walls are easy to build. What you want is the ability to speak with actual past clients – ideally in a similar industry or with a comparable use case. Do their published case studies show real outcomes or just project timelines? Did the solution scale beyond the initial deployment? A vendor confident in their work will make those conversations easy to have.

For example, our testimonials include full names, companies, and links to case studies, no digging required. See the work, verify the results, reach out directly if you’d like. Take a look for yourself.

Noam Bardin

Founder

We had a great experience with Master of Code Global — the speed in which they built up a multidisciplinary team including eng, design, QA, TPM allowed us to quickly roll out our product while hiring an internal team.

Check case study

Axelle Basso Bondini

Senior Manager Marketing

Master of Code Global worked on a tight turn-around, was extremely helpful and professional in developing all components of the project up to the brand’s high standards.

Check case study

Matt Meisner

VP, Performance Marketing

The chatbot not only drove $500,000 in revenue within the first few months but also achieved 3X better conversion than the website and an 89% user response rate. Master of Code Global proved to be creative and technical experts in the chatbot space.

Check case study

#7 Team Composition and Seniority

Who sells the project and who builds it are often two different people. Ask specifically: which team members will be assigned to your project, what is their seniority level, and will they remain on it through delivery? Mid-project turnover is one of the most common – and least discussed – reasons AI initiatives lose momentum. It’s a simple question that reveals a lot about how the company actually operates.

For instance, when clients opt for the AI managed dedicated team model at Master of Code Global, they can review CVs and meet the proposed experts before work begins. But the bigger value is what that team brings with them. Specialists who’ve worked across dozens of projects carry practical knowledge that no documentation captures. Real edge cases, integration pitfalls, model quirks – that kind of expertise transfers directly into your project from day one, shortening time to value.

#8 Engagement Model Flexibility

The right partner meets you where you are.

That might mean starting with a focused AI proof of concept (PoC) to test assumptions before any major investment, a fixed-scope project, or a longer-term embedded team, depending on complexity. If a vendor pushes the same engagement structure regardless of your situation, that tells you something about whose interests they’re serving.

As Olga Hrom, our Director of Pre-Sales Strategy & Delivery, puts it:

AI is not a feature — it’s a transformation. That’s why you need to move in small, validated steps. A proof of concept helps you learn fast, fail fast, and avoid spending months building something your end users won’t even use.

#9 Post-Deployment Support

Custom AI development doesn’t end at launch. Models drift, data changes, and business requirements evolve. The right partner treats ongoing support and model monitoring as part of the engagement, not an afterthought or an upsell opportunity.
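As a rough illustration of what monitoring for drift can involve, the sketch below compares a baseline sample of some numeric signal (an input feature or a model confidence score, for instance) with a recent sample using the Population Stability Index. The `psi` helper, the bin count, and the sample data are assumptions made for this example. The 0.25 threshold mentioned afterwards is a commonly quoted rule of thumb, not a standard.

```python
import math


def psi(expected: list[float], actual: list[float], bins: int = 5) -> float:
    """Population Stability Index between a baseline sample and a recent sample."""
    lo, hi = min(expected), max(expected)
    # Fall back to a width of 1.0 if every baseline value is identical.
    width = (hi - lo) / bins or 1.0

    def shares(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the baseline range land in the first or last bin.
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Illustrative data: the recent sample is the baseline shifted upward.
baseline = [i / 10 for i in range(50)]
recent = [v + 3.0 for v in baseline]

no_drift = psi(baseline, baseline)
drift = psi(baseline, recent)
```

Identical samples score zero, and the shifted sample scores far above 0.25. A partner who takes post-deployment support seriously will have some equivalent of this check running on a schedule, with someone responsible for acting on the alert.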

As one Director at a UK Conversational AI company noted in our The State of AI in Financial Services report: “Successful programs are treated like hiring a new employee: requiring structured onboarding, ongoing coaching, and performance tracking. Even strong platforms fail if delivery partners can’t execute.”

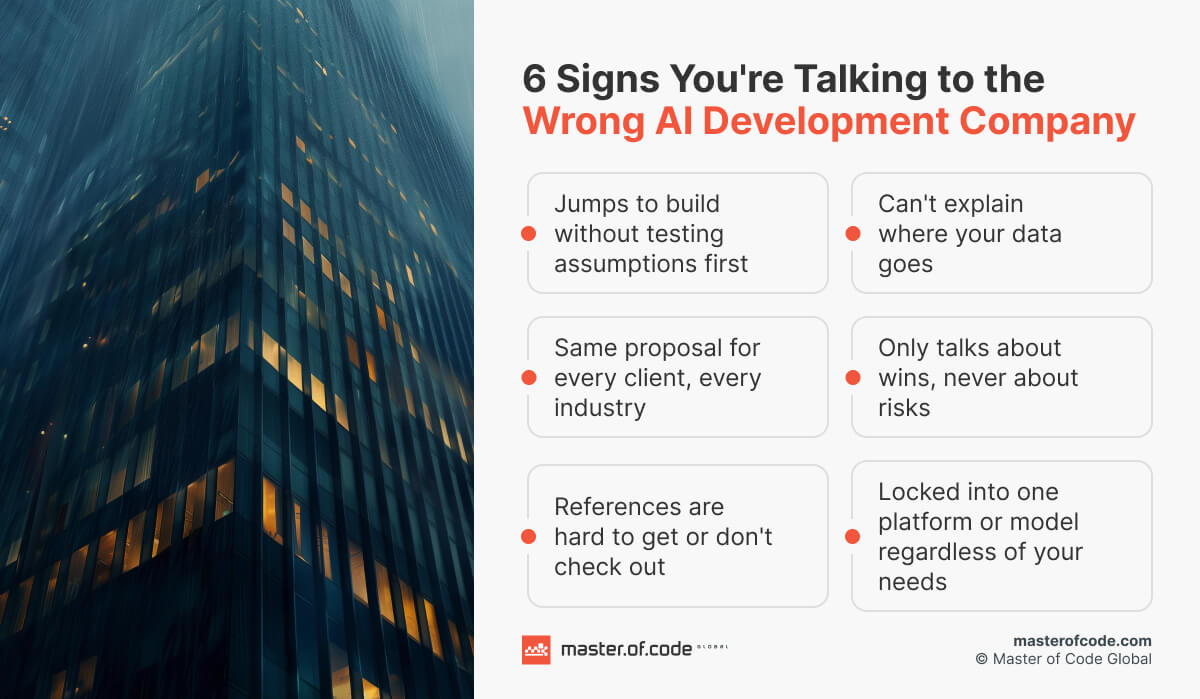

4 Red Flags to Watch for When Evaluating AI Development Companies

Some vendors are easy to dismiss. Others are convincing right up until the moment they aren’t. These are the patterns worth watching for before you sign anything.

They only speak in success stories

- Every question about risk, failure, or limitations gets deflected rather than addressed.

- Pre-sales conversations feel one-sided – all upside, no tradeoffs.

- There’s no acknowledgment of what could go wrong, what will require effort on your end, or where the solution has boundaries.

The vendor that won Ibrahim Khoury’s confidence at Alight Solutions did it by being the only one willing to say: “You are going to need to put people and processes in place to make sure that the system works. You’ll have to train it, monitor it, and invest money in making sure that it works.” No other vendor said that.

They skip validation on complex use cases

- For novel integrations or use cases not widely tested in your industry, they push straight to full AI business implementation.

- No proof of concept is proposed, even when the technical unknowns are significant.

- The conversation jumps to timelines and budget before assumptions have been tested.

As Bogdan Sergiienko, CTO at Master of Code Global, puts it:

We check first against business goals — does this work or not? If it does, planning the rest becomes much easier.

Vague answers on data privacy

- Can’t clearly explain where your data goes, how it’s stored, or who can access it.

- Deflects compliance questions with marketing language rather than tech specifics.

- No verifiable certifications or documented security frameworks to point to.

Frank Luzsicza, CEO of Blue Ink Security, frames the test simply: “If they give you buzzwords, vague reassurances, or complex technical explanations without actual answers — that’s your red flag.”

They promise a complete AI package from day one

- The proposal looks identical regardless of your industry, data maturity, or infrastructure.

- No discovery questions were asked before the solution was defined.

- Everything sounds frictionless, inevitable, and ready to deploy.

The Managing Director at an international technology consultancy put it plainly: “The greatest danger is moving too quickly without a clear strategy. Rapid adoption can increase both operational and regulatory exposure.”

Key Questions to Ask During Vendor Due Diligence

One of the harder parts of knowing how to choose an AI development company is spotting the vendors that are convincing right up until the moment they aren’t. These are the questions that separate prepared answers from honest ones.

On Data and Security

- Where exactly does our data go, and who has access to it at each stage?

- Will our data be used to train or improve your models?

- What certifications do you hold, and can you map them to our exact compliance requirements?

- Can the solution run within our own cloud environment or on-premise infrastructure?

Arun Chandrasekaran from Gartner recommends getting specific:

Ask about the measures vendors take to ensure that data remains private and isn’t used to train and enrich their models. How is the prompt data stored in their environment? Can I run it in my own virtual cloud?

On Technology and Architecture

- Which models or tools are you planning to use, and why those specifically?

- Have you evaluated alternatives, and what made you rule them out?

- How do you handle model monitoring and retraining once in production?

- What does your MLOps process look like for a project of this complexity?

In our The State of AI in Financial Services report, a Product Manager at an American retail bank described how their organization handled this internally: “Leadership demanded clarity on where data goes, how it’s stored, and how secure it is end-to-end.” That same rigor applies to the technical stack itself, not just the data layer.

On Process and Delivery

- Who will be working on this project, and what is their experience level?

- How do you handle scope changes mid-project?

- What does your discovery and PoC process look like before full development begins?

- How do you define and measure success? Do you work with clients to build an ROI framework before development begins?

Stephen Backholm, Founder of True Cedar LLC, recommends pushing beyond the technical and asking about the total cost of ownership. Beyond the initial cost, ask about ongoing costs like support, updates, or subscription fees. It’s not just about the tech aspects of the AI, but also how the vendor supports you.
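The total cost of ownership comparison comes down to simple arithmetic: one-time build cost plus recurring costs over the period you expect to run the solution. The sketch below uses made-up figures and a hypothetical `VendorQuote` structure purely to show the shape of the calculation.

```python
from dataclasses import dataclass


@dataclass
class VendorQuote:
    name: str
    initial_build: float        # one-time cost
    annual_support: float       # maintenance and monitoring per year
    annual_subscription: float  # licences, hosting, model usage per year


def total_cost_of_ownership(quote: VendorQuote, years: int) -> float:
    """One-time cost plus recurring costs over the evaluation horizon."""
    recurring = quote.annual_support + quote.annual_subscription
    return quote.initial_build + years * recurring


# Illustrative figures only, not market data.
vendor_a = VendorQuote("Vendor A", initial_build=100_000,
                       annual_support=20_000, annual_subscription=30_000)
vendor_b = VendorQuote("Vendor B", initial_build=150_000,
                       annual_support=10_000, annual_subscription=10_000)

tco_a = total_cost_of_ownership(vendor_a, years=3)  # 100000 + 3 * 50000 = 250000
tco_b = total_cost_of_ownership(vendor_b, years=3)  # 150000 + 3 * 20000 = 210000
```

In this example the vendor with the lower upfront quote ends up more expensive over three years, which is exactly the kind of gap that only shows up when recurring costs are part of the conversation.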

On Vendor Stability and Contracts

- How long have you been delivering AI implementations, not software development generally?

- Can you connect us with two or three clients from a similar industry?

- What happens to our data and codebase if we end the engagement?

- What does your exit clause look like, and do we retain full IP ownership?

This last point matters more than most buyers realize. Andy Thurai, VP and Principal Analyst at Constellation Research, is direct about it: “There’s a possibility some of these vendors can go bankrupt in no time. They might quickly burn through their cash and go belly up. Or one of their systems gets hacked, and you don’t want to have your calls go through there anymore.” Building an exit into the contract isn’t pessimism. It’s vendor due diligence done properly.
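If several vendors make it through these conversations, a simple weighted scorecard can keep the comparison honest. The sketch below groups ratings by the four question categories above. The weights, the 1 to 5 scale, and the example ratings are assumptions to adjust to your own priorities, not a recommended standard.

```python
# Hypothetical weights per question category; they should sum to 1.0.
WEIGHTS = {
    "data_and_security": 0.35,
    "technology_and_architecture": 0.25,
    "process_and_delivery": 0.20,
    "stability_and_contracts": 0.20,
}


def weighted_score(ratings: dict[str, int]) -> float:
    """Combine 1-5 ratings per category into a single weighted score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"Missing ratings for: {sorted(missing)}")
    return round(sum(WEIGHTS[k] * ratings[k] for k in WEIGHTS), 2)


# Example ratings a review panel might assign after due diligence calls.
vendor_x = {
    "data_and_security": 5,
    "technology_and_architecture": 3,
    "process_and_delivery": 4,
    "stability_and_contracts": 4,
}
vendor_y = {
    "data_and_security": 3,
    "technology_and_architecture": 5,
    "process_and_delivery": 4,
    "stability_and_contracts": 3,
}

score_x = weighted_score(vendor_x)
score_y = weighted_score(vendor_y)
```

Agreeing on the weights before the first vendor call matters more than the arithmetic: it stops the most polished demo from quietly rewriting your priorities.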

What the Best AI Implementation Partners Do Differently

There’s a version of cooperation that feels transactional – you define a scope, they build it, they leave, and post-deployment support gets sidelined. The companies that get the most out of development and AI integration services tend to end up with something different. Here’s what that actually looks like in practice.

#1. They start with your problem, not their solution

The best partners spend more time asking questions in early conversations than talking about their capabilities. They want to understand your workflows, your data reality, and what success looks like in your specific context before any architecture decisions are made.

Chirag Agrawal, Senior Software Engineer at Amazon, describes what gets missed when this doesn’t happen:

I’ve worked with partners who were brilliant in AI models but lacked a deep understanding of the regulatory nuances and data governance frameworks specific to our industry. That gap creates hidden risks, particularly when it comes to compliance and explainability.

#2. They treat industry context as load-bearing

Generic AI implementations – the kind that could be dropped into any company in any sector – tend to underperform against ones built around the distinctive limitations of your domain.

Hrishi Pippadipally, CIO of Wiss, puts it directly: “Many firms understand AI tools at a surface level, but what truly matters is the ability to contextualize AI within the nuances of a specific industry. Deploying AI without understanding regulatory and client-data constraints leads to failed pilots. A strong partner bridges this gap by knowing not only the technology, but also how it applies to your business model, compliance requirements, and workflows.”

#3. They leave you stronger, not dependent

A partner worth keeping doesn’t build a black box and hand you the keys. They document their work, share knowledge with your internal team, and make sure your people know what was built and why.

As Chirag Agrawal notes: “The best AI consultants don’t just hand over code; they help upskill teams, build trust in AI outputs, and leave the organization stronger, not dependent.”

#4. They’re honest about what comes next

The most useful thing a partner can do after a successful first phase is tell you clearly what it revealed. What assumptions held, what didn’t, and what the logical next step actually is versus what the most ambitious next step would be. Too few do.

Olga Hrom describes what happens instead:

Most companies look at AI implementation as an IT project. A technical team builds something, ships it, and that’s it. But there’s no adoption plan, no development roadmap, and often no one even uses it. The solution might be technically solid, but if nobody thought about what happens after launch, it just sits there.

#5. They’ve built the ecosystem fluency to make things connect

AI solutions rarely live in isolation. They need to talk to your CRM, your data pipelines, and your existing tools. A partner with broad platform experience and genuine vendor independence can recommend the right combination of technologies rather than defaulting to whatever they know best. That kind of flexibility, especially on integration and model selection, is what separates fancy custom AI development in a demo from an application that performs in your actual environment.

Before You Decide…

Knowing how to choose an AI development company looks straightforward on paper. In practice, it rarely is. Some businesses come in with a clear use case, clean data, and a defined scope. Others are still figuring out where AI fits and what problem they’re actually trying to solve. Some need a focused PoC to validate an idea before spending a cent on development. Others are ready to scale something that’s already working. The right vendor for one situation is often the wrong one for another.

What does help is talking to someone who’s seen enough projects to recognize the patterns. At Master of Code Global, we’ve worked with businesses at every stage of the AI journey – from first-time PoCs to enterprise-scale deployments across fintech, retail, healthcare, and more. We don’t lead with a solution. We start by understanding your situation, and from there we can tell you honestly whether we’re the right fit, what the path forward looks like, and what to watch out for along the way.

If you’re evaluating your options and want a straight conversation, we’re just a few clicks away.